Recently, data engineering and management have grown more difficult for companies building modern applications. One leading reason is the lack of support for multimodal data.

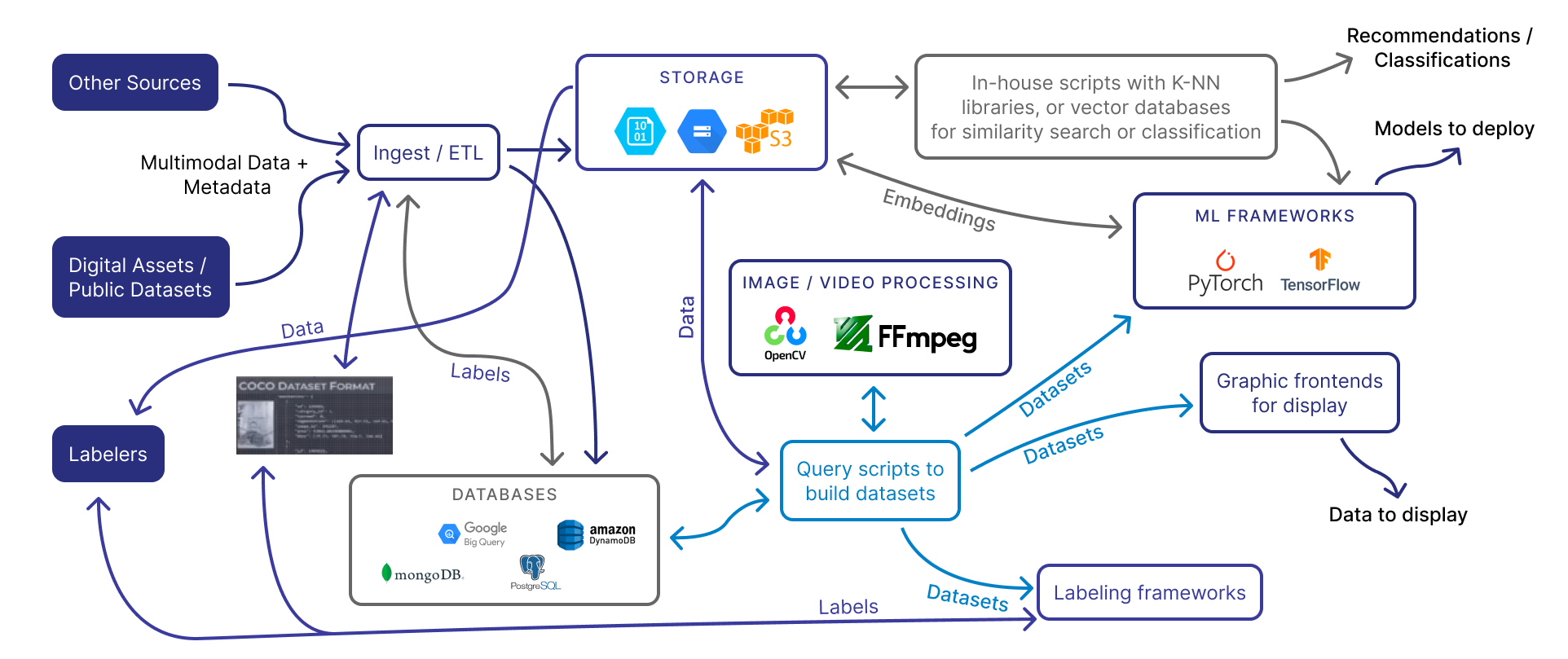

Today, application data, especially for AI-driven applications, includes text, images, audio, video, and sometimes complex hierarchical data. While each of these data types can be processed efficiently on its own, together they create an architectural cobweb that any spider would be ashamed of, like the one pictured above.

This mess is the consequence of a few problems with multimodal data. The first is the lack of a unified data store: different data types are saved in and accessed from different databases or storage locations, each optimized for that data type’s profile. The second is that multimodal data must be pushed through sprawling data pipelines to merge and display it. Finally, given the complexity of the underlying architecture, these systems struggle to avoid duplicates and to delete connected data consistently. All of these issues make collaboration difficult for both engineering and data science teams.

This calls for a purpose-built database that shrinks diagrams like the one above. Metaphorically, a database tuned for multimodal use cases can act as a plunger for these clogged data pipelines. With the right implementation, it can save companies a lot of headaches and wasted engineering time.

A Core Theme

The job of data engineering has become more complex in recent years for a few reasons. The primary cause is the growing demand for AI, from both a consumer and a technical standpoint. Today, AI-driven applications rarely rely on a single model. They use AI models for text, images, video, and even arbitrary file types.

Given the explosion of technologies in this space, coupled with a general lack of purpose-built tools, data engineers have recently been stringing together spaghetti-like architectures just so an application can get a single job done. These issues carry over into data management roles like data science, where dealing with duplicated or misaligned data is common.

We can see these problems by surveying some of the spaces where AI has become especially popular.

Multimodal Data—An Industry Breakdown

Multimodal data might be an emerging buzzword, but it has real applications.

E-Commerce

One of the biggest users of multimodal data is e-commerce companies.

Today, product listings almost always involve two types of data—text (titles, descriptions, labels) and images (product shots). On occasion, they may also feature a video or a 3D spatial demonstration.

A revenue-driving feature of any e-commerce website is suggesting similar products to browsing customers. You’re buying a brown fedora? Here are other antique hats to consider.

Previously, supporting this feature was simple: e-commerce companies had manageable product lists, so products could be tagged manually to help applications make good recommendations. Today, however, accurate tagging has become difficult for two reasons: (i) product inventory is sometimes managed by third-party sellers, and (ii) product lists have grown massive. For instance, Wayfair has over 14M items listed for sale, and it’s hardly the biggest.

To address this, e-commerce companies have built data teams that use AI to recommend similar items automatically, a topic explored in detail in another piece. At first, teams used AI to suggest tags, with approval left to a human. Today, however, AI can compare two products directly: each product is converted into an embedding vector, and the vectors are compared with a similarity measure such as cosine similarity, typically through a k-nearest-neighbor (kNN) search.
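To make the comparison step concrete, here is a minimal brute-force sketch in Python. The product names and 3-dimensional embeddings are made up for illustration; real embeddings come from an image or text model and typically have hundreds of dimensions, and production systems usually replace the linear scan with an approximate nearest-neighbor index.

```python
import math


def cosine_similarity(a, b):
    """Cosine of the angle between two equal-length vectors (1.0 = same direction)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)


def k_nearest(query, catalog, k=2):
    """Brute-force kNN: score every product against the query and keep the k most similar."""
    scored = [(cosine_similarity(query, vec), sku) for sku, vec in catalog.items()]
    scored.sort(reverse=True)  # highest similarity first
    return [sku for _, sku in scored[:k]]


# Toy 3-dimensional embeddings; a real system would produce these with an image or text model.
catalog = {
    "trilby-gray": [0.8, 0.2, 0.1],
    "bowler-black": [0.7, 0.3, 0.0],
    "sneaker-white": [0.0, 0.1, 0.9],
}
brown_fedora = [0.9, 0.1, 0.0]  # embedding of the item the customer is buying

print(k_nearest(brown_fedora, catalog, k=2))  # ['trilby-gray', 'bowler-black']
```

The hats rank above the sneaker because their vectors point in nearly the same direction as the fedora’s, regardless of magnitude.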

But there is an issue with that approach—it mandates complex architecture.

Retail

Retail companies might face the same challenges as e-commerce companies with their respective online storefronts, but they have a unique additional problem—shopper optimization, that is, algorithmically compelling shoppers to buy more.

It might sound a bit like Black Mirror, but modern retailers use camera footage to record and analyze shopper movements. Modern computer vision makes this possible: automatically generated, time-stamped events can accurately map a shopper’s journey through the store. Stores can then reorganize shelves to maximize product discovery and, by extension, purchases, driving more revenue per location without changing staffing costs.

If this sounds like a hard problem, rest assured . . . it is. It requires you to combine numeric data (purchases) with video data (cameras) and structured, locational data (products mapped to aisle and shelf coordinates). But once all of this data is pushed through a multimodal analysis, stores can make profitable adjustments.
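A minimal sketch of that join, in Python, assuming the vision model has already turned footage into time-stamped floor coordinates per shopper. The shelf layout, events, and sales figures are all hypothetical; the point is that three differently shaped datasets have to be aligned on space and time before any analysis can happen.

```python
from collections import Counter, defaultdict

# Hypothetical shelf layout: shelf_id -> floor-plan box (x_min, y_min, x_max, y_max) in meters.
SHELVES = {
    "A1-snacks": (0.0, 0.0, 2.0, 1.0),
    "A2-drinks": (2.0, 0.0, 4.0, 1.0),
}

# Hypothetical computer-vision output: (shopper_id, timestamp_seconds, x, y) sightings.
EVENTS = [
    ("s1", 0, 0.5, 0.5), ("s1", 5, 1.5, 0.5), ("s1", 10, 2.5, 0.5),
    ("s2", 0, 3.0, 0.5), ("s2", 5, 3.5, 0.5),
]

# Hypothetical point-of-sale data: units sold from each shelf in the same period.
UNITS_SOLD = {"A1-snacks": 1, "A2-drinks": 1}


def shelf_for(x, y):
    """Return the shelf zone containing a floor coordinate, or None for open floor."""
    for shelf_id, (x0, y0, x1, y1) in SHELVES.items():
        if x0 <= x < x1 and y0 <= y < y1:
            return shelf_id
    return None


def dwell_seconds(events):
    """Credit the time between a shopper's consecutive sightings to the zone of the earlier sighting."""
    by_shopper = defaultdict(list)
    for shopper_id, t, x, y in events:
        by_shopper[shopper_id].append((t, x, y))
    dwell = Counter()
    for sightings in by_shopper.values():
        sightings.sort()  # chronological order per shopper
        for (t0, x, y), (t1, _, _) in zip(sightings, sightings[1:]):
            shelf = shelf_for(x, y)
            if shelf is not None:
                dwell[shelf] += t1 - t0
    return dwell


dwell = dwell_seconds(EVENTS)
for shelf_id in SHELVES:
    # Join the video-derived dwell time with sales for the same shelf.
    print(shelf_id, dwell[shelf_id], UNITS_SOLD.get(shelf_id, 0))
```

Here both shelves sell one unit, but shoppers linger twice as long at the snacks, which is the kind of signal a store might use when rearranging shelves.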

Of course, there is a lingering issue. This, again, requires complex architecture.

Industrial and Visual Inspection

Factories typically have a long list of compliance requirements to operate safely. These range from protecting workers (e.g., enforcing that workers wear protective equipment like hard hats or goggles) to protecting consumers (e.g., ensuring products are manufactured to specification).

Using cameras and microphones, factories can pull in data to enforce these compliance requirements without dramatically growing their management staff. With multimodal AI, managers can be pinged whenever a device, product, or worker is operating out of compliance. This can prevent machines from breaking, minimize general maintenance costs, and, most importantly, protect the health of workers and consumers.

However, this is a tough problem, explored in detail in another piece. For AI to work effectively without excessive false positives and false negatives, it needs to be trained on both audio and video data of factory machines, many of which are niche and unique to the product being manufactured. Like the previous problems, it requires complex architecture to work.
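One common way to trade false positives against responsiveness when pinging managers is to require a signal to persist before alerting. The Python sketch below assumes hypothetical video and audio models that each emit a score per time window; the threshold and persistence values are illustrative, not tuned, and a real system would likely track each modality and each camera separately.

```python
from dataclasses import dataclass


@dataclass
class Window:
    """Scores in [0, 1] from two hypothetical per-modality models for one time window."""
    missing_ppe: float    # video model: likelihood a worker lacks a hard hat or goggles
    audio_anomaly: float  # audio model: how abnormal the machine currently sounds


def should_alert(windows, threshold=0.8, persistence=3):
    """Ping a manager only if some modality stays at or above `threshold`
    for `persistence` consecutive windows, which filters out one-off spikes."""
    streak = 0
    for w in windows:
        if max(w.missing_ppe, w.audio_anomaly) >= threshold:
            streak += 1
            if streak >= persistence:
                return True
        else:
            streak = 0
    return False


spike = [Window(0.1, 0.2), Window(0.95, 0.1), Window(0.1, 0.2), Window(0.2, 0.1)]
sustained = [Window(0.1, 0.85), Window(0.2, 0.9), Window(0.1, 0.88)]
print(should_alert(spike), should_alert(sustained))  # False True
```

Raising `persistence` suppresses more false positives but delays real alerts, which is one reason training data that reflects the actual machines matters so much.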

Medical and Life Science

With the success of deep learning on ImageNet came an explosion of companies attempting to improve medical diagnoses with AI. So far, we’ve mostly seen success in this field for problems that involve a single diagnostic test. For instance, AI can detect skin cancer effectively because the data consists strictly of images of skin. However, most medical diagnoses involve several modalities of data, mixing blood reports, CT scans, genetic tests, X-ray imaging, ultrasound imaging, and other data to reach a diagnosis.

Using multimodal analysis, software can aid doctors in considering even complex conditions. But this involves stringing together multiple models across many pipelines, all under HIPAA compliance constraints. Architecture, once again, becomes a challenge.

Similarly, life science and biotech companies have been using the same techniques to improve drug discovery and aid pharmaceutical research. These involve the same data types and pipelines as medical data; broadly speaking, the modern medical industry depends on solving multimodal data problems.

Other Verticals

The list of verticals that face multimodal data problems, and that stand to benefit from solving them, is long. It’s the natural consequence of more and more applications tackling problems that combine text with images, video, and arbitrary JSON or XML data.

How To Support Multimodal Data

The Core Requirements

There are a number of subproblems that any multimodal data application needs to account for, particularly if it takes a do-it-yourself (DIY) approach.

The first is storage. Data needs to be stored somewhere that is easy to index and retrieve from. Storage is easy when data is strictly text or hierarchical (e.g., JSON), where an OLTP database like Postgres or a document database like MongoDB could be used. However, when images, videos, and other large files are involved, conventional databases fall apart unless paired with external cloud buckets. This creates a new challenge: linking data across multiple locations, where naming becomes paramount.
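A minimal sketch of that DIY pattern, in Python: structured fields live in a relational table (SQLite here, standing in for Postgres) while image bytes live in an object store (a plain dict standing in for a cloud bucket). The table layout and key scheme are hypothetical. The only thing tying the two together is the naming convention, so deleting from one side silently leaves a dangling reference in the other.

```python
import sqlite3

bucket = {}  # stand-in for an object store (e.g. a cloud bucket): object key -> bytes

db = sqlite3.connect(":memory:")
db.execute("CREATE TABLE products (sku TEXT PRIMARY KEY, title TEXT, image_key TEXT)")


def add_product(sku, title, image_bytes):
    """Two writes to two systems; nothing makes them atomic."""
    key = f"images/{sku}.jpg"  # the naming convention is the only link between the stores
    bucket[key] = image_bytes
    db.execute("INSERT INTO products VALUES (?, ?, ?)", (sku, title, key))
    db.commit()


def dangling_references():
    """SKUs whose image_key points at an object that no longer exists."""
    rows = db.execute("SELECT sku, image_key FROM products ORDER BY sku").fetchall()
    return [sku for sku, key in rows if key not in bucket]


add_product("fedora-brown", "Brown fedora", b"fake-jpeg-bytes-1")
add_product("trilby-gray", "Gray trilby", b"fake-jpeg-bytes-2")
del bucket["images/trilby-gray.jpg"]  # e.g. a cleanup job removes the file but not the row
print(dangling_references())  # ['trilby-gray']
```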

For AI-driven applications, data needs to be fed through AI models, which generate additional metadata (e.g., embeddings and application-specific attributes) for future indexing. And, if data is intended for training, annotations need to be created and stored.
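The Python sketch below shows the shape of that extra data. The `embed` function is a hypothetical stand-in for a real model (it just computes an intensity histogram), and the annotation format is made up; what matters is that every ingested item now carries derived fields that must be stored, indexed, and kept in sync with the original.

```python
from dataclasses import dataclass, field


@dataclass
class Record:
    """An ingested image plus the derived data that must be stored and kept in sync with it."""
    image_id: str
    pixels: list                                      # 2D grayscale image, values 0-255
    embedding: list = field(default_factory=list)     # used for similarity indexing
    annotations: list = field(default_factory=list)  # labels and boxes used for training


def embed(pixels, bins=4):
    """Hypothetical stand-in for a model: a normalized histogram of pixel intensities."""
    flat = [p for row in pixels for p in row]
    counts = [0] * bins
    for p in flat:
        counts[min(p * bins // 256, bins - 1)] += 1
    return [c / len(flat) for c in counts]


rec = Record("img-001", [[0, 64], [128, 255]])
rec.embedding = embed(rec.pixels)
rec.annotations.append({"label": "hat", "box": [0, 0, 1, 1]})
print(rec.embedding)  # [0.25, 0.25, 0.25, 0.25]
```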

Additionally, applications often need to display previews for any listed entry. Loading the full image or video for a preview is expensive when files are large, so thumbnails need to be generated and easy to retrieve. However, because many processes require thumbnails, it’s easy to create duplicates. And, unfortunately, duplicates can bias model training by overrepresenting some samples.
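One common mitigation, sketched here in Python under simplifying assumptions, is content addressing: derive a thumbnail’s key from a hash of the source content and the thumbnail size, so any process that needs the same thumbnail finds the existing copy. The same hash flags exact duplicates before training. Images are modeled as small 2D lists and storage as a dict; a real pipeline would hash the encoded file and would need perceptual hashes or embeddings to catch near-duplicates.

```python
import hashlib
from collections import defaultdict

thumbnail_store = {}  # stand-in for wherever thumbnails live: key -> thumbnail pixels


def content_hash(pixels):
    """Deterministic fingerprint of an image's exact pixel content."""
    return hashlib.sha256(repr(pixels).encode()).hexdigest()


def downsample(pixels, factor):
    """Naive thumbnail: keep every `factor`-th pixel in each direction."""
    return [row[::factor] for row in pixels[::factor]]


def get_thumbnail(pixels, factor=2):
    """Key thumbnails by (content, size) so every process reuses one copy instead of making its own."""
    key = f"{content_hash(pixels)}-x{factor}"
    if key not in thumbnail_store:
        thumbnail_store[key] = downsample(pixels, factor)
    return thumbnail_store[key]


def exact_duplicates(images):
    """Group image ids with identical pixel content (near-duplicates need other methods)."""
    groups = defaultdict(list)
    for image_id, pixels in images.items():
        groups[content_hash(pixels)].append(image_id)
    return [ids for ids in groups.values() if len(ids) > 1]


img = [[1, 2, 3, 4], [5, 6, 7, 8], [9, 10, 11, 12], [13, 14, 15, 16]]
print(get_thumbnail(img))  # [[1, 3], [9, 11]]
get_thumbnail([row[:] for row in img])  # a second process, same content: no new thumbnail
print(len(thumbnail_store))  # 1
print(exact_duplicates({"a": img, "b": [row[:] for row in img], "c": [[0]]}))  # [['a', 'b']]
```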

Finally, there are processes like similarity search, where data is retrieved, ranked, and dispatched to frontend applications. This can involve more code imported from third-party libraries.
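Those three stages can be sketched in a few lines of Python. The candidate list, field names, and thumbnail URL scheme are hypothetical; in a DIY stack, each stage often lives in a different service or library, which is exactly the surface area that has to be built and maintained.

```python
import heapq
import json

# Hypothetical candidates from a similarity index, already joined with product metadata.
candidates = [
    {"sku": "trilby-gray", "score": 0.98, "category": "hats", "in_stock": True},
    {"sku": "bowler-black", "score": 0.96, "category": "hats", "in_stock": False},
    {"sku": "scarf-wool", "score": 0.93, "category": "scarves", "in_stock": True},
    {"sku": "panama-straw", "score": 0.91, "category": "hats", "in_stock": True},
]


def recommend(candidates, category, k=2):
    """Retrieve (filter on metadata), rank (by similarity), and dispatch (JSON for a frontend)."""
    eligible = [c for c in candidates if c["category"] == category and c["in_stock"]]
    top = heapq.nlargest(k, eligible, key=lambda c: c["score"])
    payload = [{"sku": c["sku"], "thumbnail": f"/thumbnails/{c['sku']}.jpg"} for c in top]
    return json.dumps(payload)


print(recommend(candidates, "hats"))
```

The out-of-stock bowler and the off-category scarf are dropped before ranking, so the frontend receives the trilby and the panama.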

Each of these discrete parts can experience an outage. Accordingly, they need to be properly built and managed.

The Halfway Solutions For Multimodal Data

There are plenty of databases that offer support for some subproblems faced by multimodal data but fall critically short in others.

The first category is key-value, wide-column, and document databases such as Redis, Cassandra, and MongoDB. These databases have strong query capabilities for JSON-like entries and even some support for storing images. But those features were added as extensions rather than designed as a first-order concern, so these databases lack core abilities like preprocessing images and videos for downstream use. Additionally, they break down when handling large files; MongoDB, for example, caps documents at 16 MB and relies on GridFS for anything larger.

The second category is graph databases such as Neo4j and MarkLogic. These databases are excellent at establishing connections (e.g., linking data to metadata) but fall flat with the same problems as document databases. They are not built for storing or preprocessing large data files; instead, they expect that data to be siloed in storage solutions like S3.

The third category is time-series databases such as InfluxDB or TimescaleDB, which support data from multiple sensors and modalities but not the relationships between those modalities. This makes them unsuitable for applications that need multimodal analysis.

Multi-model databases like ArangoDB or Azure Cosmos DB combine different database models into one integrated engine. Such databases can accommodate various data models, including relational, object-oriented, key-value, wide-column, document, and graph models, and they can perform most of the basic operations of other databases, such as storing, indexing, and querying data. However, they lack support for storing or preprocessing large unstructured files, which again leads to siloed storage solutions.

Vector databases have recently gained recognition for the role they play in LLM applications and semantic search. They store large volumes of data as n-dimensional vectors, which enables vector search: finding the stored vectors closest to a query vector, which is how AI processes find similar data. However, their support for filtering by complex metadata and for accessing the underlying data itself is very limited, so applications are often left carrying each vector’s unique ID around and using it to fetch the actual data from another system.
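That workaround looks roughly like the Python sketch below, in which a dict of IDs and vectors stands in for the vector store and a second dict stands in for the metadata store. All names and values are hypothetical. Because the metadata filter runs in a second hop, after the top-k cut, it can return fewer results than requested.

```python
import math

# Stand-in vector store: only ids and vectors.
vector_index = {
    "v1": [1.0, 0.0],
    "v2": [0.6, 0.8],
    "v3": [0.0, 1.0],
}

# Stand-in metadata store keyed by the same ids; the ids are the only glue.
metadata = {
    "v1": {"title": "Brown fedora", "price": 40, "blob_key": "images/v1.jpg"},
    "v2": {"title": "Gray trilby", "price": 120, "blob_key": "images/v2.jpg"},
    "v3": {"title": "Straw panama", "price": 30, "blob_key": "images/v3.jpg"},
}


def search_then_filter(query, k, max_price):
    """Hop 1: nearest ids from the vector store. Hop 2: carry ids to the metadata store to filter."""
    hits = sorted(vector_index, key=lambda vid: math.dist(query, vector_index[vid]))[:k]
    return [metadata[vid]["title"] for vid in hits if metadata[vid]["price"] <= max_price]


print(search_then_filter([1.0, 0.0], k=2, max_price=50))  # ['Brown fedora']
```

Asking for two results returns one, even though "Straw panama" satisfies the price filter, because the filter only saw the two nearest IDs. Working around this means over-fetching or making more round trips between systems.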

The final category is one of the biggest topics of the last decade: warehouse solutions such as Snowflake and Redshift. These data warehouses let data teams analyze their data, but they are built for analytics rather than for serving applications. They aren’t designed to be production databases for data that is queried, processed, and delivered to front-end applications.

Data Catalogs, A Partial Solution

Data catalogs integrate with data stores rather than being primary data stores themselves, making them an intermediary solution to the spaghetti-like mess of modern architecture. Data catalogs such as Secoda unify metadata about an organization’s data into a single location for humans to search. They are the digital analog of inventory management.

While data catalogs help data teams visualize and discover data and answer case-by-case queries, they do not reduce architectural complexity. They are frontends for humans to query, not systems built to serve data-centric AI functions.

The Solution: A Purpose-Built Database for Multimodal AI

A database for multimodal AI is similar to a multi-model database. It needs to support different modalities of data, but not necessarily different database models. Its purpose is to unify and prepare the various data types that feed into multimodal AI models or that analytics requires in the use cases listed above. Because it must store metadata and unstructured data and support data processing, it is better thought of as a fork and extension of multi-model databases, specialized for analytics rather than for simply supporting different database models.

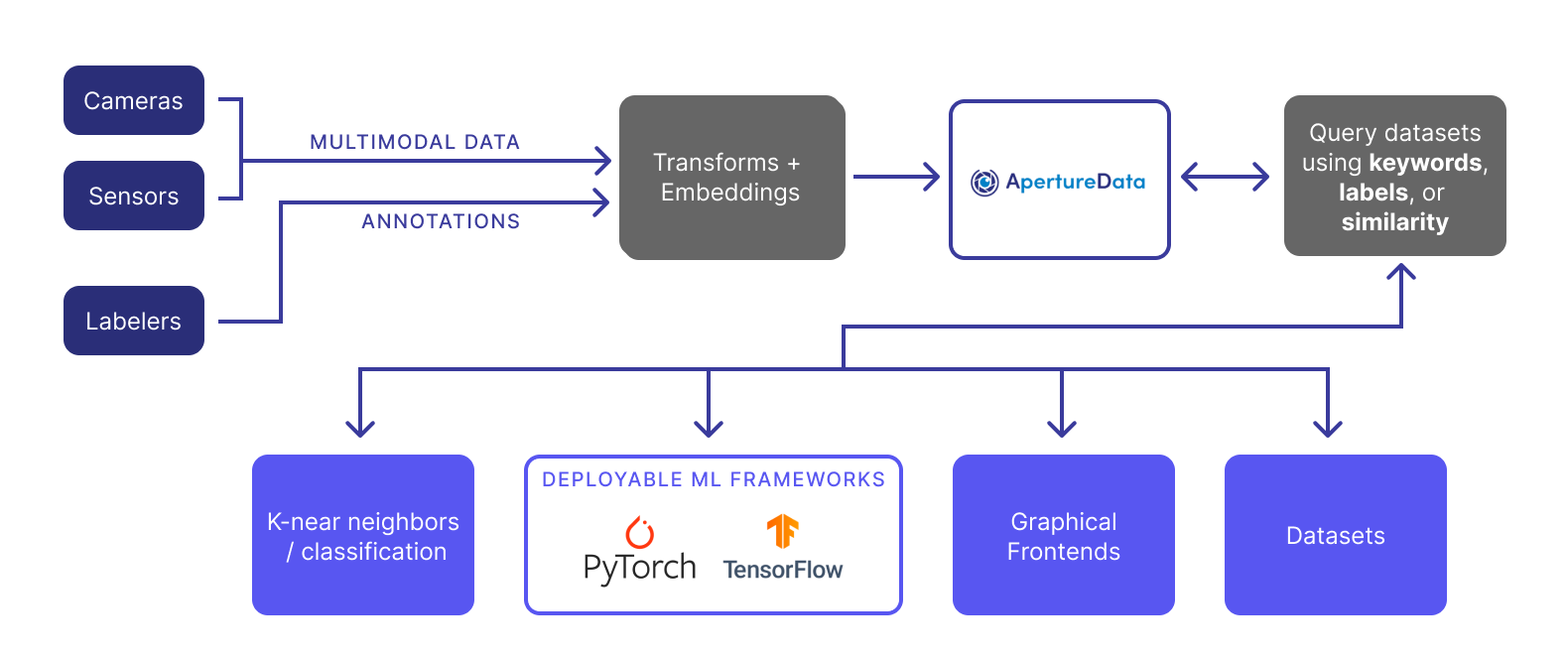

For example, ApertureDB supports a broad set of features for storing multiple types of data. It works with text, can preprocess images and videos, and supports arbitrary JSON blobs. This dramatically reduces the amount of external transformation data must undergo before it’s ingested or consumed, and it sharply reduces the number of data locations.

A purpose-built database for multimodal data addresses the architectural complexity faced by all of the aforementioned verticals. It can also improve the performance of multimodal AI by unifying the various data types in a single place, with no external plumbing required from users, while integrating with machine learning pipelines and analytics applications. In simple terms, it is an all-in-one solution for indexing and managing high-dimensional data.

Multimodal databases can also be the primary storage location queried by production applications.

Closing Thoughts

As the world inches toward multimodal data-driven applications, especially with the advent of widely available artificial intelligence, a purpose-built multimodal database becomes more and more important. A multimodal database can dramatically simplify the underlying architecture, improve performance, and provide an all-in-one solution for high-dimensional indexing. It reduces the engineering time spent building complex data pipelines and the data science time spent maintaining them. In a nutshell, it lets companies focus on their value proposition rather than on headache-inducing stack diagrams for organizing and consuming a wide variety of rich data.

If you are interested in learning more about how a multimodal database works, including the set-up costs, consider reaching out to us at team@aperturedata.io. We are building an industry-leading database for multimodal AI to simplify the aforementioned problems. Additionally, stay informed about our journey and learn more about the components mentioned above by subscribing to our blog.

I want to acknowledge the insights and valuable edits from Mathew Pregasen and Luis Remis.