Author: Dr. Sangwoo Shim

We are living in the age of artificial intelligence (AI), a technology that has made its way into every industry and is advancing at an unprecedented pace.

Epitomizing the innovations in AI is the hyperscale AI model. The number of parameters, which serves as an indicator of the scale of machine learning (ML) models, doubled in just a year, from about 50 million in Google’s Transformer in 2017 to about 100 million in OpenAI’s GPT in 2018. Parameter counts then went on to multiply roughly tenfold each year, reaching 175 billion with GPT-3 in 2020 and 1.6 trillion with Google’s Switch Transformer in 2021, heralding the dawn of hyperscale AI computing.

Hyperscale AI models are multipurpose: a single model can be put to many applications. Unlike conventional ML models, which need to be taught how to solve each problem, these hyperscale models can find solutions on their own as long as they are given clear explanations of the problems they are to tackle. It is this ability that allows these models to paint pictures and compose music that rival those of human artists. The massive amounts of effort and resources poured into processing trillions of parameters are justified by the multipurpose utility of these models.

10,000 ML Models for 10,000 Manufacturers

Across industry as a whole, and in manufacturing in particular, the vast potential of AI remains difficult to realize. Deloitte’s survey of AI applications in manufacturing reports that the success rate of AI projects at manufacturing companies remains low, at nine percent.

A major reason for this low rate of success can be found in the wide diversity of the problems and data of the many different manufacturing companies. There is no standard model or methodology of data collection and processing among these companies, nor are there adequate ML models that can be referenced. Any manufacturer interested in adopting AI is thus forced to have a customized model tailored to the particular conditions and tasks it faces.

For consumer AI, a single monolithic model—even if it is not a general-purpose, hyperscale model—can serve hundreds of millions of users. Examples include AI-based translation services and shopping recommendation algorithms used by e-commerce platforms. An AI model developed for a single manufacturer, however, can be used for only that particular company.

Consider the example of defect detection AI models—perhaps the most popular kind in manufacturing today. Both a company manufacturing automotive cylinders and another company manufacturing smartphone displays need defect detection solutions to ensure the quality of their products. For those tasked with developing AI solutions for these companies, however, the resulting models are vastly different in every aspect, from the types of data required to the criteria used to define defects. A state-of-the-art AI model developed to detect defects in automotive cylinders simply cannot be used to detect defects in smartphone displays.

Andrew Ng, an American computer scientist and ML expert, thus concludes: “In consumer Internet software, we could train a handful of machine-learning models to serve a billion users. In manufacturing, you might have 10,000 manufacturers building 10,000 custom AI models.”1

1 Eliza Strickland (2022), “Andrew Ng: Unbiggen AI,” IEEE Spectrum (February 9, 2022) (retrieved from https://spectrum.ieee.org/andrew-ng-data-centric-ai).

Manufacturers also frequently alter their processes and production line structures and replace the parts involved in the production of finished goods. All these changes mean that the basic data fed into the AI model need to be changed as well, requiring the model to repeat its learning processes. In some cases, an entirely different AI model may be needed.

In addition to design and development, the deployment and operation of a given AI/ML model present challenges of their own. An AI/ML model developed for manufacturing needs to be adapted to suit the particular client’s manufacturing environment. These environments, however, vary widely from company to company. This is what sets AI models for manufacturing apart from AI models developed exclusively for digital services. The conditions into which a manufacturing AI model is deployed, in other words, can be unpredictably different from the conditions in which it was developed. From an operational standpoint, a model must be retrained on new data after some time; and, more frequently than expected, the changes to the data may be so significant that a new model must be developed.

OODA Loop: Rapid iteration for better results

To be successful with industrial AI, we must approach the ML lifecycle from a different perspective. On the surface, the cycle seems to be a linear process, proceeding from the problem definition stage to the collection and analysis of the necessary data, the development of a suitable ML model, and deployment. The actual process, however, is rarely so neat, with each step having to be repeated until it produces the successful result that is necessary for the next step to take place. It is commonplace for ML engineers to return to the preceding stage, or even the stage before that, due to a problem occurring at different stages of the ML lifecycle.

The most common case is for an ML model to be successfully developed and deployed into the production environment only for it to fail to operate as expected. This is due to the aforementioned differences between the development and deployment environments. In this case, the ML engineer must return to the modeling stage to synchronize the two environments. However, even returning to modeling may not be enough to solve the problem, necessitating a further regression back to data collection or analysis or the revision of the entire data collection or analysis method. In some cases, ML engineers may have to return to the very beginning, where the problem itself needs to be redefined.

In other words, the AI/ML lifecycle is a process of improving a model or application by exploring different possibilities through iterations of each stage of the lifecycle. Given the unpredictability of the variables involved, it makes sense to find ways to accelerate the necessary iterations instead of perfecting the quality of each step of the loop through enormous investments of time and effort.

The observe-orient-decide-act (OODA) loop strategy is one such method for accelerating the requisite iterations so as to obtain the desired results. The OODA loop is a military concept developed by U.S. Air Force Colonel John Boyd, who found inspiration for it in his observation and analysis of dogfights between U.S. and USSR fighter jets during the Korean War. In essence, the OODA loop holds that the faster one side completes its loop of decision-making and execution in response to the enemy’s movements, the greater that side’s chances of winning. In other words, the speed of iteration matters more than the quality of each iteration. Boyd himself applied this doctrine to remain undefeated in mock dogfights.

When applied to AI development, the OODA loop strategy means that the faster we can iterate through the ML lifecycle of problem definition, data collection and analysis, modeling, and deployment, the greater the chances of success for the AI project.

MLOps: the Prerequisite for Accelerating the OODA Loop

What, then, is needed to accelerate the completion of each ML lifecycle?

ML models lie at the heart of AI, but applying models to an actual business environment is a demanding process that requires a multiplicity of factors in order to be successful. A successful ML model requires not just the collection, verification, and processing of data but also multiple tests and debugging runs, infrastructure capable of hosting the given model as well as the ongoing monitoring thereof, and integrated management of resources and data. Any of these elements could go awry without forewarning, rendering the whole model ineffective.

Recall that ML models for manufacturing are often developed and applied in vastly different conditions. Data scientists and ML engineers typically handle the development phase, including problem definition, data collection, and modeling. Software engineers then install and operate the final model. Development and deployment, however, involve not only different working conditions but also different modes of thinking. The separation of these two phases thus slows the OODA loop process.

What matters as much as developing a good model is therefore establishing a system that ensures the effective deployment and use of that model.

This explains the growing appeal of MLOps today. MLOps involves establishing a comprehensive system that ensures smooth transitions between the steps of the ML lifecycle by enabling prompt responses and troubleshooting in any part of the loop. Its scope covers not just model development but also the software engineering involved in building applications from the models and operating them in actual production environments.

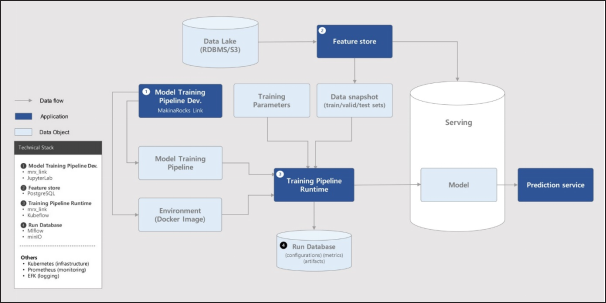

The key to MLOps is bridging machine learning (ML) and operations (ops). The disconnect between these two areas severely limits how quickly a model can learn from new data. Through its training pipeline, MLOps delivers data to the software that generates the model. Those tasked with operating a given ML model can therefore generate a new model simply by entering new data directly into the pipeline, without having to go through the ML engineer. MLOps ensures that the data fed into the pipeline comes from the same source and thus has the same format and attributes.
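The idea of a training pipeline that operators can feed directly can be sketched as follows. This is a minimal illustration under stated assumptions, not the Runway™ implementation: the schema, the column names, and the toy "model" (per-column mean and standard deviation) are all hypothetical. The point it shows is that the pipeline checks the format and attributes of incoming data before any training happens.

```python
from dataclasses import dataclass
from statistics import mean, stdev

# Hypothetical schema: the columns and types this pipeline was built for.
EXPECTED_SCHEMA = {"temperature": float, "pressure": float}


@dataclass
class Model:
    """Toy 'model': per-column (mean, standard deviation) of the training data."""
    stats: dict


def validate(rows, schema=EXPECTED_SCHEMA):
    """Reject data whose columns or types differ from what the pipeline expects."""
    for i, row in enumerate(rows):
        if set(row) != set(schema):
            raise ValueError(f"row {i}: columns {sorted(row)} != {sorted(schema)}")
        for column, expected_type in schema.items():
            if not isinstance(row[column], expected_type):
                raise ValueError(f"row {i}: {column} is not {expected_type.__name__}")


def run_training_pipeline(rows):
    """Validate first, then train. An operator can call this with new data."""
    validate(rows)
    stats = {
        column: (mean(r[column] for r in rows), stdev(r[column] for r in rows))
        for column in EXPECTED_SCHEMA
    }
    return Model(stats)
```

Because validation is part of the pipeline itself, an operator who submits data with a missing column gets an immediate, readable error instead of a silently degraded model.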

MLOps, moreover, ensures that the software developed as part of the ML model can continue to function in actual operations. Although all IT solutions must face the discrepancy between development and operation environments, the gap becomes especially detrimental when it concerns AI/ML models because the data scientists and operating engineers involved think fundamentally differently. One solution to this problem is to deliver an automatically tested and verified training pipeline, which is the central feature of all MLOps platforms.

MLOps Specialized for Industrial AI

Through the experience it has gained in diverse AI projects in a wide range of manufacturing fields, including the semiconductor, energy, and automotive industries, MakinaRocks has come to recognize the need for an MLOps platform that is tailored to AI solutions for industrial and manufacturing sites. Runway™ is the culmination of the experience and expertise that MakinaRocks has gained over the years.

Industrial AI models are customized to suit the specific circumstances and characteristics of the given manufacturers. As there are no uniform standards governing the quality and scale of the data generated by these manufacturers or the environments in which the models are to be built, customization is a requirement when it comes to manufacturing clients.

MakinaRocks’ Runway™ provides flexibility in all stages of the ML lifecycle so as to ensure timely responses to the widely varying datasets and problems that can emerge in industrial environments. It has also been designed to ensure the seamless operation of ML models and to provide standardized environments for their development, deployment, and operation, thus enabling rapid iteration throughout the ML lifecycle.

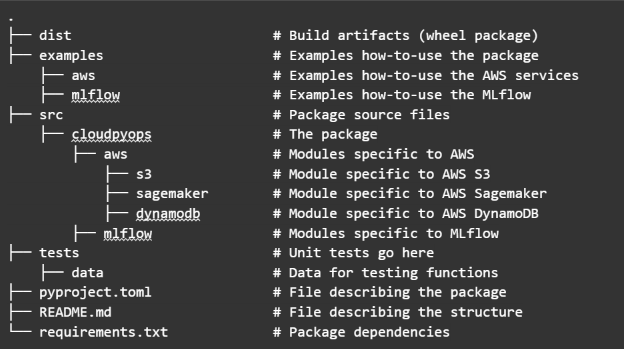

Integrating Link™, MakinaRocks’ Jupyter-based ML modeling tool, into Runway™ enables users to develop high-performance ML models using diverse datasets with a variety of libraries and frameworks. Moreover, the pipelines for retraining are generated immediately after the models are developed.

Furthermore, Runway™ offers a broad range of features that help resolve the chronic issues associated with operating AI models in manufacturing, including synchronization of the development environment and the operations environment and providing direct access to deployed models for retraining and debugging.
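One simple way to picture the synchronization of development and operations environments is a comparison of the package versions installed on each side. The sketch below is an assumption-laden illustration, not a description of how Runway™ works: the function name and the package versions are made up, and a real check would cover much more than Python packages.

```python
def diff_environments(dev, ops):
    """Compare two {package: version} mappings.

    Returns {package: (dev_version, ops_version)} for every mismatch.
    A package missing on one side is reported with None on that side.
    """
    mismatches = {}
    for package in sorted(set(dev) | set(ops)):
        if dev.get(package) != ops.get(package):
            mismatches[package] = (dev.get(package), ops.get(package))
    return mismatches


# Hypothetical snapshots of the two environments.
dev_env = {"numpy": "1.26.4", "torch": "2.2.0", "pandas": "2.2.1"}
ops_env = {"numpy": "1.26.4", "torch": "2.1.0"}

for package, (dev_version, ops_version) in diff_environments(dev_env, ops_env).items():
    print(f"{package}: development={dev_version}, operations={ops_version}")
```

An empty result means the two snapshots agree. Any entry in the result is a candidate explanation when a model behaves differently after deployment.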

An MLOps Use Case in Anomaly Detection

AI/ML has a broad array of industrial applications. The best-known ones include anomaly detection, intelligent control and optimization, and predictive analytics. Through its participation in a large number of AI projects involving various Korean manufacturing companies and companies in other industries, MakinaRocks has built a wide-ranging portfolio of references in automated chip design, anomaly detection in industrial robot arms, anomaly detection for semiconductor facilities, detection of future emergency shutdowns of petrochemical reactors, remaining useful life prediction for EV batteries, solar power generation prediction, and optimization of EV energy management system controls.

The detection of laser drill anomalies is a compelling example of how MakinaRocks’ MLOps platform is used in real-world situations. In semiconductor manufacturing, laser drills are crucial tools. The client company was operating dozens of laser drills with identical specifications. Nonetheless, different AI models needed to be developed and deployed separately, as each drill was operated with different core parts and parameters and produced a unique distribution of data. Moreover, any interruption in the operation of just one laser drill led to the shutdown of the entire production line. These problems added to the urgency of adopting a comprehensive and more effective AI solution.

In manufacturing, normal data is easy to collect, while data on anomalies is notoriously difficult to obtain. MakinaRocks’ solution thus featured an autoencoder model trained on normal data, which it learns to compress and restore. Readings that such a model restores poorly can then be treated as signs of an anomaly. The resulting model was designed to detect and identify signs of forthcoming interruptions with a high level of precision based on semi-supervised and continual learning. The model thus informed facility maintenance staff of these signs one month in advance so that they could carry out maintenance in time.

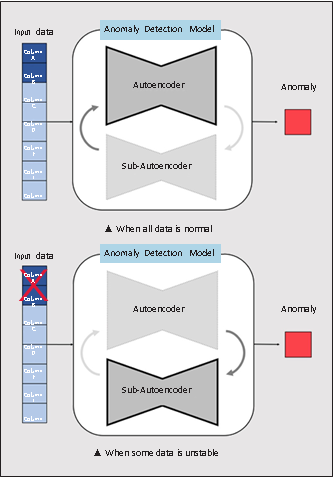
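The principle of training on normal data only and flagging readings that restore poorly can be sketched in a few lines. This is a simplified stand-in, not MakinaRocks’ model: a linear autoencoder fitted in closed form with an SVD replaces the neural autoencoder, the sensor data is synthetic, and the 99th-percentile alarm threshold is an arbitrary assumption.

```python
import numpy as np


class LinearAutoencoder:
    """Linear stand-in for a neural autoencoder, fitted in closed form via SVD."""

    def __init__(self, n_components, quantile=0.99):
        self.n_components = n_components
        self.quantile = quantile  # hypothetical choice of alarm threshold

    def fit(self, normal_data):
        # Learn the low-dimensional subspace that normal readings occupy.
        self.mean_ = normal_data.mean(axis=0)
        _, _, vt = np.linalg.svd(normal_data - self.mean_, full_matrices=False)
        self.components_ = vt[: self.n_components]
        # Alarm threshold: a high quantile of the error seen on normal data.
        errors = self.reconstruction_error(normal_data)
        self.threshold_ = np.quantile(errors, self.quantile)
        return self

    def reconstruction_error(self, data):
        centered = data - self.mean_
        code = centered @ self.components_.T  # compress
        restored = code @ self.components_    # restore
        return np.mean((centered - restored) ** 2, axis=1)

    def is_anomaly(self, data):
        return self.reconstruction_error(data) > self.threshold_


# Synthetic "normal" data: six sensors driven by two hidden factors plus noise.
rng = np.random.default_rng(0)
mixing = np.array([[1.0, 0.0, 1.0, 0.0, 1.0, 0.0],
                   [0.0, 1.0, 0.0, 1.0, 0.0, 1.0]])
normal = rng.normal(size=(500, 2)) @ mixing + 0.05 * rng.normal(size=(500, 6))

model = LinearAutoencoder(n_components=2).fit(normal)

# A reading whose first sensor departs from the pattern of the others.
faulty = normal[:1].copy()
faulty[0, 0] += 5.0
print(model.is_anomaly(faulty))
```

No anomalous examples are needed for training. The model only learns what normal looks like, which matches the situation described above, where anomaly data is scarce.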

The defining achievement of this project is the design of a model capable of providing uninterrupted inferences even when the availability of relatively less important data is not guaranteed. The previous models in place were unable to produce inference results when even some of the multiple sensors installed on the laser drills failed. Such frequent interruptions prevented continuous monitoring and inference and left the client dissatisfied with the given AI solution.

Figure 4┃Model Design Capable of Coping with Unstable Data

MakinaRocks’ solution was able to maintain its inference performance by training an additional model with data that excluded the unstable sections. As Figure 4 shows, the solution actually involved two models: the main model, applying its autoencoder to normal data, and the replacement model, applying a sub-autoencoder to the less important data with fluctuating availability. The solution alternated between these two models depending on the availability of data. The streaming-serving and model-shadowing features of Runway™, in other words, enabled the solution to continue to monitor the laser drills and detect anomalies notwithstanding trouble in some of the sensors.

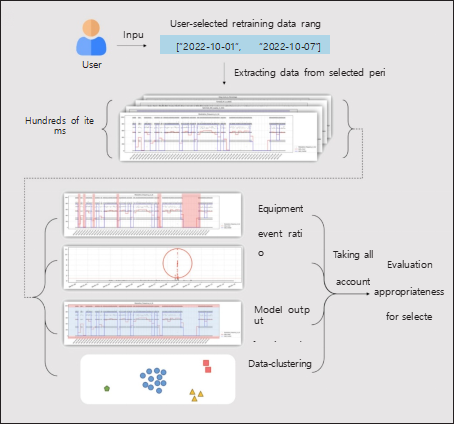
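The alternation between the two models can be illustrated with a small routing function. This is only a sketch of the general idea, with hypothetical sensor names and a made-up function name. It does not reproduce the streaming-serving or model-shadowing features of Runway™.

```python
import math


def is_available(value):
    """A reading counts as available if it is present and not NaN."""
    return value is not None and not math.isnan(value)


def route(reading, all_sensors, stable_sensors):
    """Pick the model that can score this reading.

    Returns ("main", features) when every sensor reports, and
    ("replacement", features) restricted to the stable sensors otherwise.
    """
    if all(is_available(reading.get(s)) for s in all_sensors):
        return "main", [reading[s] for s in all_sensors]
    if all(is_available(reading.get(s)) for s in stable_sensors):
        return "replacement", [reading[s] for s in stable_sensors]
    raise ValueError("a stable sensor is missing; neither model can score this reading")


# Hypothetical sensor layout: "vibration" is the sensor with unstable availability.
ALL_SENSORS = ["power", "temperature", "vibration"]
STABLE_SENSORS = ["power", "temperature"]

print(route({"power": 1.0, "temperature": 2.0, "vibration": 3.0}, ALL_SENSORS, STABLE_SENSORS))
print(route({"power": 1.0, "temperature": 2.0, "vibration": None}, ALL_SENSORS, STABLE_SENSORS))
```

Routing per reading means monitoring continues through a sensor outage, at the cost of scoring with fewer features while the outage lasts.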

Furthermore, MakinaRocks’ solution also included an algorithm to determine the appropriateness of datasets for retraining, enabling general users of the solution to retrain ML models with the right data without the help of data scientists or ML engineers. During a major event, such as the replacement of a core part, the distribution of data under observation changes significantly, necessitating that the ML model be retrained with the new data. For lay users who lack data analysis skills, however, it is difficult to choose what data should be fed into the model for retraining.

The intuitive user interface and dashboard of Runway™ support general users’ decision-making based on multiple variables. The algorithm considers a variety of factors simultaneously, including the ratio of events involving the given core part as saved on the dashboard, the ratio of objective anomaly data included, the output of an anomaly-detecting algorithm based on the scope of the data, and the output of a data-clustering algorithm. As a result, general users can train and operate the AI model themselves, even during major events, without the help of data scientists or ML engineers.
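A much-reduced version of such a suitability check might look like the sketch below. It is not the algorithm used in the project: it considers only two simplified factors (how much of the dataset was recorded after the part replacement and how much of it is flagged as anomalous), and the thresholds, field names, and function name are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Sample:
    timestamp: float         # time the reading was recorded
    flagged_anomalous: bool  # label from an upstream detector or an operator


def retraining_check(samples, event_time,
                     min_post_event_ratio=0.9, max_anomaly_ratio=0.05):
    """Return (ok, reasons) for a candidate retraining dataset.

    Both thresholds are illustrative assumptions, not values from the source.
    """
    if not samples:
        return False, ["dataset is empty"]
    reasons = []
    post_event_ratio = sum(s.timestamp >= event_time for s in samples) / len(samples)
    anomaly_ratio = sum(s.flagged_anomalous for s in samples) / len(samples)
    if post_event_ratio < min_post_event_ratio:
        reasons.append(f"only {post_event_ratio:.0%} of samples follow the part replacement")
    if anomaly_ratio > max_anomaly_ratio:
        reasons.append(f"{anomaly_ratio:.0%} of samples are flagged as anomalous")
    return not reasons, reasons


# A dataset that mixes readings from before and after a replacement at t=100.
mixed = [Sample(float(t), False) for t in range(50, 150)]
print(retraining_check(mixed, event_time=100.0))
```

Returning the reasons alongside the verdict matters for lay users: a dashboard can show why a dataset was rejected instead of only refusing to retrain.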

Introducing AI That Solves the “Real Problems” of Industries

Operations is ultimately where an AI project comes to completion. An ML model boasting the most advanced design would not have much value if it failed to operate in the target environment. Models that fail to retrain themselves without interruption are also of little use. MLOps thus holds the key to the success of AI projects.

MLOps is a broad-ranging concept that spans design and operations alike. The definition of an MLOps solution thus varies from company to company. A company with sufficient data science capacity should ideally adopt a bespoke MLOps solution, delivered as a platform-as-a-service (PaaS), that allows freedom in ML modeling based on coding flexibility. A company that lacks such development capacity and needs an MLOps platform that supports citizen data scientists would find a software-as-a-service (SaaS) MLOps product more useful. For the majority of companies, however, the choice is not always so clear-cut.

| Sector | Objectives | Issues/challenges | Runway™ effects |
| --- | --- | --- | --- |
| Tech | Focus on project-based model development; deploy 50+ ML models. | Shortage of manpower capable of developing open source-based MLOps; 6+ months needed for deployment. | Reduced the period required for deployment from six months to four weeks (a reduction of about 80%); reduced the manpower required for developing MLOps environments by about 50%. |
| Manufacturing | Introduce an ML-based anomaly detection solution in a semiconductor production facility. | Lack of trained data scientists; lack of capabilities to operate and manage high-performance ML models. | Provided intuitive UI/UX to support citizen data scientists; enabled users to retrain and deploy models without requiring extensive experience with ML. |
| Energy | Improve the solar power generation prediction model for 600+ solar power plants. | Rising cost of model maintenance due to lack of synchronization between development and deployment environments. | Simplified debugging by using a built-in model development platform; accelerated the scaling-out of the identical model. |

MLOps solutions should be flexible so that they can accommodate the different concerns and business focuses of different clients. Some clients want a solution that can be interfaced with diverse legacy systems. Some want a solution whose training and operating environments are kept completely separate in order to maximize the efficiency of resource use. As industries require high-performance AI solutions, it is also crucial to ensure that the solution, developed through countless hours of experimentation and analysis by experts, can continue to perform optimally when applied to actual industrial situations. The operating process, too, should reflect the distinct business logic of the client company.

Equipped with extensive experience in AI development and application, MakinaRocks has created an MLOps platform that is capable of meeting such diverse needs of industries. Going forward, the company will continue to lower the barriers to industrial AI solutions and help establish AI standards that can solve the real problems of industries.
