According to Gartner, by 2025 more than 50% of enterprise-critical data will be created and processed outside the data center or cloud

According to Gartner, by 2025 more than 50% of enterprise-critical data will be created and processed outside the data center or cloud. As we start to think about all the possibilities for AI and Machine Learning projects this will generate, we have to remember that only 50% of AI initiatives reach an operational state (production) within the enterprise. Not only that: of the 50% that do, only 10% produce meaningful ROI.

This is a crucial time to establish best practices and put in place processes and solutions that help ML models work more effectively by getting them into production. This is especially important right now, as the capabilities and potential of data are accelerating innovation through LLMs, Computer Vision, and AI at the Edge. Specifically, Edge devices will be generating the data created outside the cloud that was mentioned at the beginning of this post. Let’s look at some challenges Edge AI deployments face, the assumptions and perceptions behind them, and best practices to overcome them.

Challenge 1: Managing Data Generated Outside the Cloud

Assumptions/Perception

Data generated outside the cloud is another source to improve models I train and run inside my cloud

- Edge is characterized by dynamic and unpredictable environments where the quality and quantity of data may vary significantly over time. This makes it difficult to maintain the performance of deployed models and can also introduce bias during model development.

Edge is another “cloud” to deploy models and run inference

- Edge has limited resources such as memory, processing power, and energy, which constrain the size and complexity of the models that can be deployed.

- This creates the need for central management of models that are deployed in various environments.
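As a rough illustration of how resource limits constrain which model can run where, a central registry might hold several variants of the same model and pick the most accurate one that fits each device. The sketch below is an assumption-laden example, not part of any particular product: the `ModelVariant` type, the `pick_variant` helper, the variant names, and all memory and accuracy figures are hypothetical.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass(frozen=True)
class ModelVariant:
    name: str
    memory_mb: int    # peak memory needed at inference time (hypothetical figure)
    accuracy: float   # offline validation accuracy (hypothetical figure)


def pick_variant(
    variants: List[ModelVariant],
    device_memory_mb: int,
    headroom: float = 0.8,
) -> Optional[ModelVariant]:
    """Return the most accurate variant that fits in a fraction of device memory.

    `headroom` leaves part of the memory free for the OS and other processes.
    Returns None when no variant fits.
    """
    budget = device_memory_mb * headroom
    fitting = [v for v in variants if v.memory_mb <= budget]
    return max(fitting, key=lambda v: v.accuracy, default=None)


# Illustrative catalogue; the numbers are made up for the example.
VARIANTS = [
    ModelVariant("detector-large", 2048, 0.94),
    ModelVariant("detector-small", 512, 0.90),
    ModelVariant("detector-tiny", 128, 0.85),
]

choice = pick_variant(VARIANTS, device_memory_mb=1024)
print(choice.name if choice else "no variant fits")
```

Keeping this decision in one central place, rather than hard-coding a model per device, is one way to manage deployments across very different environments.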

Edge has limited use cases for my industry

- Edge is not only IoT devices. It encompasses anything that isn’t your current data center, including not just on-device deployments but also local data centers and other cloud points of presence. As a result, the potential for Edge AI use cases is much greater than commonly perceived.

Best Practices/Considerations

More data at the edge means an increased need for real-time automated decisions

- Limited connectivity and bandwidth are also challenges of edge computing. Models must be able to operate without continuous connectivity to a central server and must be able to manage data transmissions efficiently. Real-time responses are often necessary for edge computing, which requires models to process data quickly and respond rapidly to changing conditions.

- Finally, maintaining model accuracy is more difficult at the edge. Models must be able to adapt to changing conditions and continue to provide accurate results despite changing data inputs. The ability to monitor and manage model performance is critical to ensure that models continue to provide accurate results.

- These challenges make model performance management more complicated at the edge than in centralized environments. To overcome these challenges, specific strategies must be employed, including model optimization, efficient use of computing resources, and real-time monitoring of model performance.
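One common way to operate without continuous connectivity is a store-and-forward buffer: inference runs locally, results are queued on the device, and the queue is drained whenever the link to the central server is available. The following is a minimal sketch under stated assumptions. The `StoreAndForward` class and the `send` callback are hypothetical, and a real deployment would likely persist the queue to disk and batch its transmissions.

```python
from collections import deque
from typing import Any, Callable


class StoreAndForward:
    """Bounded in-memory queue for edge results awaiting upload."""

    def __init__(self, send: Callable[[Any], bool], max_items: int = 1000):
        # `send` is a caller-supplied function that returns True on success
        # and False when the central server cannot be reached.
        self._send = send
        # When the queue is full, the oldest record is dropped to bound memory.
        self._queue = deque(maxlen=max_items)

    def record(self, item: Any) -> None:
        """Queue one inference result locally."""
        self._queue.append(item)

    def flush(self) -> int:
        """Send queued records in order, stopping at the first failure.

        Returns the number of records successfully sent.
        """
        sent = 0
        while self._queue:
            if not self._send(self._queue[0]):
                break  # still offline; keep the record for the next attempt
            self._queue.popleft()
            sent += 1
        return sent

    def __len__(self) -> int:
        return len(self._queue)
```

Calling `flush()` on a timer or after each inference keeps the device responsive while offline, and the bounded queue reflects the limited memory discussed earlier.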

Short feedback loops between insight creation and value realization

- Model observability at the edge provides quicker feedback loops and, as a result, quicker actionability, which in turn leads to improved value realization for the business.

The data at the edge is an untapped opportunity if others ignore it

- Recall the Gartner prediction cited at the beginning: more than 50% of enterprise-critical data will be created and processed outside the data center or cloud. Making that data work for the business is a crucial contributor to success, so the opportunity to use data generated at the edge is not one to pass up.

- Leveraging Edge AI solutions that take advantage of this data, and of ML models in production at the Edge, helps to realize this untapped opportunity.

Challenge 2: Going from Data to Value

Assumptions/Perception

Accurate insights and models guarantee business gains

- Accurate insights are vital for any solution; however, these insights do not always translate into business gains. Poor processes and practices around collecting and interpreting the insights increase the time taken to make decisions.

(Insight velocity) Time to insight = (Decision velocity) Time to Value

- Getting quick insights is good, but speed should be combined with the accuracy discussed above. Together they help you not only act quickly on model outputs but also make those decisions more accurate and better aligned with the business goals.

A data-centric approach to people, processes, and tools drives outcomes

- Focusing on the data output and letting it guide your decisions and people resources leads to inefficient and disjointed processes. Data is important and should help bring direction in making decisions that drive the goals of the business.

Best Practices/Considerations

Measurable operational insights guarantee sustainable business gains

- Measurable operational insights are essential for achieving sustainable business gains. By applying insights to operational data, you can identify patterns, trends, anomalies, and opportunities that can improve the performance and efficiency of ML models at the edge.

Operationalizing insights drives decision velocity, and decision velocity drives business value

- Making insights actionable and sustainable and tied to the business goals should be at the center of the process to enable fast decision-making and actionability.

- Automating as much of the process as possible helps to reduce the time and effort required to draw value from insights.

Organizing people, processes, and tools around outcomes vs. outputs

- Involve stakeholders throughout the process. Roles at all levels help ensure that the insights are aligned with the business goals and are subsequently used to benefit the organization.

- Adopting a continuous learning approach will help ML models constantly evolve through model performance monitoring and downstream retraining as needed.
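To make the monitoring-then-retraining loop concrete, one widely used drift signal is the Population Stability Index (PSI), which compares the distribution of a feature or model score in recent data against a baseline. The sketch below is illustrative only: the `psi` and `needs_retraining` helpers are hypothetical, the equal-width binning is a simplification, it assumes both inputs are non-empty, and the 0.2 threshold is a common rule of thumb rather than a value taken from this post.

```python
import math
from typing import Sequence


def psi(expected: Sequence[float], actual: Sequence[float], bins: int = 10) -> float:
    """Population Stability Index between a baseline and a recent sample.

    Bins are equal-width over the baseline's range; values outside that
    range are clamped into the first or last bin.
    """
    lo, hi = min(expected), max(expected)
    width = (hi - lo) / bins or 1.0  # guard against a constant baseline

    def proportions(values: Sequence[float]) -> list:
        counts = [0] * bins
        for v in values:
            idx = int((v - lo) / width)
            counts[min(max(idx, 0), bins - 1)] += 1
        # A small floor avoids log(0) and division by zero for empty bins.
        return [max(c / len(values), 1e-6) for c in counts]

    e, a = proportions(expected), proportions(actual)
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))


def needs_retraining(
    baseline: Sequence[float],
    recent: Sequence[float],
    threshold: float = 0.2,  # assumed rule-of-thumb cutoff
) -> bool:
    """Flag the model for downstream retraining when drift exceeds the threshold."""
    return psi(baseline, recent) > threshold
```

In practice the flag would feed a retraining pipeline or an alert for a human reviewer; which one is appropriate depends on how much risk the business accepts for automated model updates.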

Challenge 3: Managing Risks and Rewards with AI

Assumptions/Perception

Existing tools are not ready for my special compliance needs, so I am building my own

- Building your own tools takes valuable people resources to build them and adds overhead for Ops to manage and keep them updated. In addition, a bespoke solution may not adapt to integration with future software investments, scaling, and/or deployment to different Edge environments. This limits the business’s ability to take full advantage of its data and ML models.

I am not ready for the tools out there

- Production ML solutions are available that integrate into existing software investments and help put ML models into production whether they be in the cloud, datacenter, or at the edge. Integration into existing tools and software also helps ML Practitioners work in familiar environments with little to no downtime and delay for training.

Prioritizing only no-risk initiatives for small or unknown rewards

- Spending time, resources, and energy on projects that are not aligned with the business goals will not help drive the valuable model and people learnings needed to bring about short and long-term success.

Best Practices/Considerations

Does building your own tool give you the first mover advantage or the best mover advantage against your competition?

- There is a strong argument that building your own tool does NOT give you an advantage against your competition. With the speed at which the AI and ML space is moving, lengthy delays spent creating custom tooling to get your models into production and working for the business put you farther behind the pack.

- Adopting ML production solutions that integrate into your current toolset brings the flexibility to work with and deploy models to production regardless of whether a model resides in the cloud, in a data center, or at the Edge.

Partnership and joint collaboration mindset with vendors

- Leveraging the skills and experience of vendors in the ML production space for Edge AI deployments helps you benefit from the innovations that they develop to help the future growth and scale of your Edge AI deployments.

- You also benefit from owning tools for the whole MLOps lifecycle across deploying, running, managing, monitoring, and observing AI at the Edge.

De-risk high-reward initiatives with small experiments and incremental learnings

- Starting with smaller models will help drive informed learnings not only for model performance but will also help develop best practices for MLOps across deploying, running, monitoring, optimization, and management of ML models at the edge. Through developing strong MLOps processes you will be positioned well to scale with minimal change and upheaval to your entire ML production environment.

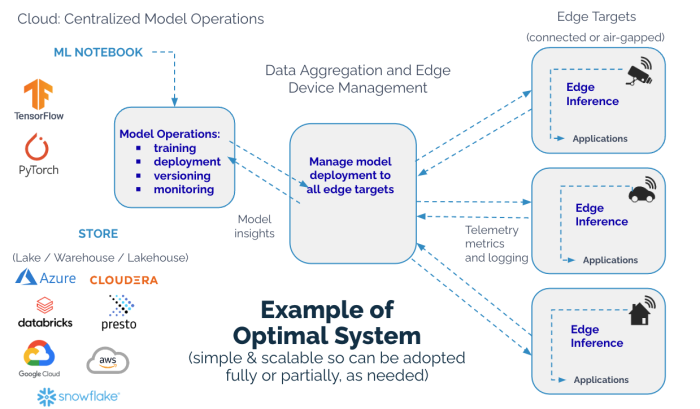

Wallaroo’s unique deployment architecture is designed specifically for implementing machine learning models at the edge. With its ability to facilitate feedback loops between edge devices and cloud infrastructure, Wallaroo’s approach enables enterprises to achieve optimized edge computing and simplify model deployment while ensuring efficient and effective ML models. This means that enterprises can make the most of their ML investments and achieve improved operational efficiency, making it an ideal platform for deploying models at the edge.

Wallaroo includes edge-to-cloud capabilities like automatic model scaling based on resource availability and real-time monitoring of model performance. These features are specifically designed to address the unique challenges of managing machine learning models at the edge.

You can deploy your own edge simulation using the Edge AI Tutorial and Wallaroo Community Edition as well as other tutorials to build your skills at these links.

Edge AI Simulation: https://hubs.la/Q01SHSkJ0

Wallaroo Community Edition: https://hubs.la/Q01SHRw30

Wallaroo Tutorials: https://hubs.la/Q01SHW9b0
