MLOps has become a popular term in the context of machine learning pipelines. It refers to the various operations involved, from building models to deploying them in real-world settings. Its goal is to ensure that machine learning processes are reproducible, reliable and capable of adapting to changes in data. This includes tasks such as continuous integration, training and deployment.

LLMOps, on the other hand, focuses on the operationalization of Large Language Model (LLM) pipelines. While LLMs also represent a type of machine learning model, LLMOps involves rethinking and reworking strategies to facilitate the process of handling LLM pipelines.

What is LLMOps?

The rise of LLMs and their increasing deployment call for sound operational practices. Models that understand and generate text find applications in tasks such as summarization, AI assistants and search. The recent surge in Large Multimodal Models (LMMs), such as ChatGPT, has added further diversity to the LLM landscape. LMMs combine various forms of media with language to enable the creation of advanced applications. Who knows? Perhaps we’ll witness the emergence of LMMOps soon enough!

While it shares many similarities with MLOps, LLMOps does face unique challenges in terms of building and deploying LLMs.

LLMOps vs. MLOps

LLMOps = MLOps + LLMs

LLMOps advocates practices tailored to the use of LLMs. Here are some key ways LLMOps diverges from MLOps:

Computational resources

LLMs usually demand more computational resources than traditional ML models, both for training and inference, because they have far more parameters and are trained on large datasets. Other ML models may occasionally need comparable resources, but that is the exception. (The “L” in LLM denotes LARGE for a reason. 🙃) To manage these resources effectively, techniques like quantization (storing weights at lower numerical precision) and sharding (splitting a model across multiple devices) become essential.
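To make quantization concrete, here is a minimal sketch of symmetric int8 quantization in plain Python. The function names `quantize_int8` and `dequantize` are illustrative and not taken from any particular library, and the single per-tensor scale is a simplifying assumption; production tooling typically works per channel or per block.

```python
from typing import List, Tuple


def quantize_int8(weights: List[float]) -> Tuple[List[int], float]:
    """Map floats to integers in [-127, 127] using one shared scale."""
    max_abs = max((abs(w) for w in weights), default=0.0)
    # Guard against an all-zero tensor, where any scale would do.
    scale = max_abs / 127 if max_abs > 0 else 1.0
    quantized = [max(-127, min(127, round(w / scale))) for w in weights]
    return quantized, scale


def dequantize(quantized: List[int], scale: float) -> List[float]:
    """Recover approximate float values from the int8 representation."""
    return [q * scale for q in quantized]


weights = [0.5, -1.27, 0.0, 1.0]
q, scale = quantize_int8(weights)
restored = dequantize(q, scale)
print(q, restored)
```

Each weight now fits in one byte instead of the four bytes a 32-bit float needs, at the cost of a rounding error of at most half the scale per weight.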

Prompt versioning

Prompts are a common way to guide models toward desired outputs. Prompt engineering is particularly effective for instruction-tuned LLMs because it enables the use of specific prompts (sometimes with added context) to direct the generation of outputs. Managing prompt versions becomes critical to monitor the progression of prompts and their corresponding outputs. This ensures you can trace which prompt resulted in which output, so you can select the most effective prompt for the model.
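One way to trace prompts to outputs is to give each prompt template a content-derived version ID and log outputs against it. The sketch below is a hypothetical in-memory registry (the `PromptRegistry` class and its methods are made up for illustration); a real pipeline would persist this in a database or an experiment tracker.

```python
import hashlib
from dataclasses import dataclass, field
from typing import Dict, List


@dataclass
class PromptRegistry:
    """Hypothetical in-memory store mapping prompt versions to outputs."""

    versions: Dict[str, str] = field(default_factory=dict)
    outputs: Dict[str, List[str]] = field(default_factory=dict)

    def register(self, template: str) -> str:
        # Hashing the template means identical prompts share one version ID.
        version_id = hashlib.sha256(template.encode("utf-8")).hexdigest()[:12]
        self.versions.setdefault(version_id, template)
        self.outputs.setdefault(version_id, [])
        return version_id

    def log_output(self, version_id: str, output: str) -> None:
        if version_id not in self.versions:
            raise KeyError(f"unknown prompt version: {version_id}")
        self.outputs[version_id].append(output)


registry = PromptRegistry()
v1 = registry.register("Summarize the following text:\n{text}")
v2 = registry.register("Summarize the following text in one sentence:\n{text}")
registry.log_output(v1, "A multi-paragraph summary.")
registry.log_output(v2, "A one-sentence summary.")
```

Because the ID is derived from the template text, any edit to a prompt produces a new version, and every logged output can be traced back to the exact wording that produced it.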

Human-in-the-loop

In both LLM and ML pipelines, involving human input is vital. However, it’s even more critical for LLMs, where reinforcement learning from human feedback (RLHF) has come to the forefront. RLHF has proven effective at aligning LLM outputs with human values. This approach enhances the reliability of LLM outputs by training a reward model that integrates human preferences. Therefore, LLMOps pipelines can involve integrating feedback loops to evaluate predictions and gather data for refining LLMs further.
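A feedback loop of this kind often ends in a dataset of preference pairs that a reward model can be trained on. The sketch below turns human ratings into such pairs; the `RatedResponse` and `PreferencePair` types and the 1 to 5 rating scale are assumptions made for illustration, not a prescribed schema.

```python
from dataclasses import dataclass
from itertools import combinations
from typing import Dict, List


@dataclass(frozen=True)
class RatedResponse:
    prompt: str
    response: str
    rating: int  # human score, assumed here to be on a 1-5 scale


@dataclass(frozen=True)
class PreferencePair:
    prompt: str
    chosen: str
    rejected: str


def build_preference_pairs(rated: List[RatedResponse]) -> List[PreferencePair]:
    """Pair up responses to the same prompt, preferring the higher rating."""
    by_prompt: Dict[str, List[RatedResponse]] = {}
    for item in rated:
        by_prompt.setdefault(item.prompt, []).append(item)

    pairs: List[PreferencePair] = []
    for prompt, items in by_prompt.items():
        for a, b in combinations(items, 2):
            if a.rating == b.rating:
                continue  # ties carry no preference signal
            chosen, rejected = (a, b) if a.rating > b.rating else (b, a)
            pairs.append(PreferencePair(prompt, chosen.response, rejected.response))
    return pairs


rated = [
    RatedResponse("Summarize the report.", "Short accurate summary.", 5),
    RatedResponse("Summarize the report.", "Off-topic reply.", 1),
    RatedResponse("Summarize the report.", "Another off-topic reply.", 1),
]
pairs = build_preference_pairs(rated)
```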

Fine-tuning and in-context learning

Many LLMs are fine-tuned versions of foundation models (unlike traditional ML models, which are generally trained from scratch). Fine-tuning requires extensive effort in data preparation, followed by fine-tuning the model on that data. The focus is on preparing the data and ensuring its quality rather than on training from scratch.

In-context learning involves providing additional context in the prompt so pre-trained LLMs can draw on new knowledge without retraining. It often involves storing and retrieving embeddings from vector databases and making calls to external services. LangChain is a popular tool for in-context learning and prompt engineering that allows you to build LLM pipelines on top of foundation models.
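The retrieval step behind in-context learning can be sketched in a few lines. In the example below, the bag-of-words `embed` function is a toy stand-in for a real embedding model, and a plain Python list stands in for a vector database; both are assumptions made to keep the sketch self-contained.

```python
import math
from collections import Counter
from typing import List


def embed(text: str) -> Counter:
    """Toy bag-of-words 'embedding'; a stand-in for a real embedding model."""
    return Counter(text.lower().split())


def cosine(a: Counter, b: Counter) -> float:
    dot = sum(a[token] * b[token] for token in a)
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def retrieve(query: str, documents: List[str], k: int = 1) -> List[str]:
    """Return the k documents most similar to the query."""
    query_vec = embed(query)
    ranked = sorted(documents, key=lambda d: cosine(query_vec, embed(d)), reverse=True)
    return ranked[:k]


def build_prompt(query: str, documents: List[str], k: int = 1) -> str:
    """Prepend the retrieved context to the question before calling an LLM."""
    context = "\n".join(retrieve(query, documents, k))
    return f"Context:\n{context}\n\nQuestion: {query}\nAnswer:"


docs = [
    "Flyte supports caching and checkpointing of tasks",
    "Quantization reduces the memory footprint of model weights",
]
prompt = build_prompt("How does quantization reduce memory", docs)
print(prompt)
```

The model itself is untouched: new knowledge reaches it only through the context placed in the prompt.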

Hyperparameter tuning

In traditional ML, hyperparameter tuning is done mainly to enhance model accuracy. With LLMs, adjusting hyperparameters also results in notable shifts in the cost and compute requirements of training and inference.

Why does LLMOps matter?

LLMs are different from other ML models for the reasons we’ve discussed, and LLM development life cycles differ slightly from those of traditional ML. An LLM pipeline includes all the typical steps of an ML pipeline, but it requires more computing power and the ability to handle complex workloads at a larger scale. LLMOps entails a continuous cycle of iteration, training and deployment of models. This process aims not only to enhance performance but also to manage the costs associated with the training and deployment of these models.

Components of LLMOps

Here’s what LLMOps encompasses:

Data management

Building an effective LLM depends on the quality of its data. Fine-tuning or introducing additional context requires you to ensure that the dataset aligns with the desired output from the LLM. Data from diverse sources contributes to a well-rounded LLM. Since raw data can be noisy and unstructured, building a high-quality dataset involves deduplication and outlier removal. Control of data versions is equally vital for managing changes and monitoring the evolution of the dataset. In addition, leveraging human-in-the-loop techniques, such as RLHF, proves valuable in validating the quality of the LLM’s responses.
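As a rough illustration of the cleaning steps mentioned above, the sketch below removes near-verbatim duplicates and drops records whose length is far outside the expected range. The function names and the token thresholds are hypothetical choices for this example; real pipelines often add fuzzy deduplication and more careful outlier criteria.

```python
from typing import Iterable, List


def normalize(text: str) -> str:
    """Lowercase and collapse whitespace so trivial variants compare equal."""
    return " ".join(text.lower().split())


def deduplicate(records: Iterable[str]) -> List[str]:
    """Keep the first occurrence of each normalized record, preserving order."""
    seen = set()
    unique: List[str] = []
    for record in records:
        key = normalize(record)
        if key and key not in seen:
            seen.add(key)
            unique.append(record)
    return unique


def drop_length_outliers(
    records: Iterable[str], min_tokens: int = 3, max_tokens: int = 512
) -> List[str]:
    """Discard records that are too short or too long (thresholds are assumptions)."""
    return [r for r in records if min_tokens <= len(r.split()) <= max_tokens]


raw = [
    "What is LLMOps?",
    "what  is   llmops?",
    "ok",
    "Explain prompt versioning in one line.",
]
clean = drop_length_outliers(deduplicate(raw))
print(clean)
```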

Model development

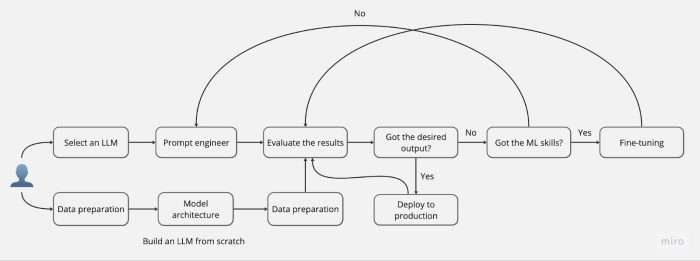

Increased availability of LLMs in both closed- and open-source domains across various fields means you must assess which model aligns best with your specific use case. Factors such as the problem domain, computational complexity and the availability of relevant data play pivotal roles in model selection.

With pretrained models widely available, starting with fine-tuning is often a good choice. Alternatively, prompt engineering can be effective without the need for model retraining.

After model preparation, standard benchmarks like GLUE, SQuAD and SNLI can be employed for evaluation. Keep in mind that these benchmarks may not cover all aspects of LLM evaluation, so you may need to adjust your approach based on your specific use case and dataset.
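For question-answering tasks, evaluation on your own dataset often comes down to exact match and token-level F1, the two metrics popularized by SQuAD. The sketch below is a simplified re-implementation for illustration, not the official SQuAD evaluation script, and its normalization rules (lowercasing, stripping punctuation and English articles) are an approximation of it.

```python
import re
import string
from collections import Counter


def normalize_answer(text: str) -> str:
    """Lowercase, strip punctuation and articles, and collapse whitespace."""
    text = text.lower()
    text = "".join(ch for ch in text if ch not in string.punctuation)
    text = re.sub(r"\b(a|an|the)\b", " ", text)
    return " ".join(text.split())


def exact_match(prediction: str, reference: str) -> bool:
    return normalize_answer(prediction) == normalize_answer(reference)


def token_f1(prediction: str, reference: str) -> float:
    """Harmonic mean of token precision and recall after normalization."""
    pred_tokens = normalize_answer(prediction).split()
    ref_tokens = normalize_answer(reference).split()
    if not pred_tokens or not ref_tokens:
        # Both empty counts as a match; one empty counts as a miss.
        return float(pred_tokens == ref_tokens)
    overlap = sum((Counter(pred_tokens) & Counter(ref_tokens)).values())
    if overlap == 0:
        return 0.0
    precision = overlap / len(pred_tokens)
    recall = overlap / len(ref_tokens)
    return 2 * precision * recall / (precision + recall)


em = exact_match("The Eiffel Tower.", "eiffel tower")
f1 = token_f1("Eiffel Tower in Paris", "Eiffel Tower")
print(em, round(f1, 3))
```

Exact match is strict, while F1 gives partial credit when the prediction contains the reference answer plus extra tokens, which is common with generative models.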

Model deployment

Serving LLMs usually demands GPU resources, although CPU inference remains an option. However, for larger models, leveraging GPUs is the preferred approach. Deploying the model can be done on-premises or in the cloud. Implementing CI/CD practices becomes crucial for a streamlined deployment process, ensuring smooth updates to the model with minimal disruption to users. Additionally, scaling the models, either up or out, might be necessary, and platforms like Kubernetes and serverless architectures prove valuable for this purpose.

Model monitoring

After deploying the model, continuous monitoring becomes imperative to identify model drift or any potential issues that may arise from changes in data distribution. Regularly monitoring and updating the model accordingly are essential to keep the LLM up to date.
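A very simple drift check compares a live window of some numeric signal against a reference window. The sketch below uses prompt length as the signal and flags drift when the live mean moves more than a chosen number of reference standard deviations; the signal, the function names and the threshold of 2.0 are all assumptions for illustration. Production monitoring usually relies on richer signals and statistical tests.

```python
from statistics import mean, stdev
from typing import Sequence


def mean_shift_score(reference: Sequence[float], live: Sequence[float]) -> float:
    """Shift of the live mean, measured in reference standard deviations.

    The reference window needs at least two values for stdev to be defined.
    """
    ref_std = stdev(reference)
    shift = abs(mean(live) - mean(reference))
    if ref_std == 0:
        return 0.0 if shift == 0 else float("inf")
    return shift / ref_std


def has_drifted(
    reference: Sequence[float], live: Sequence[float], threshold: float = 2.0
) -> bool:
    return mean_shift_score(reference, live) > threshold


reference_lengths = [40, 42, 38, 41, 39]  # prompt lengths seen at launch
stable_lengths = [41, 40, 39, 42]
shifted_lengths = [120, 130, 125, 118]

print(has_drifted(reference_lengths, stable_lengths))
print(has_drifted(reference_lengths, shifted_lengths))
```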

What are the benefits of LLMOps?

Here’s how LLMOps can be beneficial:

- Scalability: LLMOps facilitates the scalable deployment of models to accommodate fluctuating workloads and user requests.

- Computational efficiency: LLMOps prioritizes running computationally efficient workloads through optimizations and quantization strategies, helping to reduce the costs associated with training and deployment.

- Human feedback: While not mandatory, human feedback has proven to enhance LLM performance. LLMOps accommodates this by enabling seamless integration of human feedback into LLM pipelines.

LLMOps platform

An LLMOps platform empowers ML practitioners and data scientists to build LLM pipelines with ease.

As an LLMOps platform, Flyte offers features such as versioning, team collaboration, caching, checkpointing and code-level resource allocation. While online serving is not currently available, Flyte supports scalable training and deployment of models. Users can seamlessly fine-tune LLMs with declarative infrastructure, utilize the LangChain integration for running LangChain experiments with observability, and engage in prompt engineering while tracking all iterations. Try Flyte.

Additional resources

How we built FlyteGPT with LangChain

Fine-tune Llama 2 with limited resources

Fine-tuning vs. prompt engineering LLMs

The three virtues of MLOps: velocity, validation and versioning
