This article (original blog) was written by David Burchmann, who is currently the VP of Engineering at Ntropy.

This blog post explains our journey in MLOps, describing how we combined open-source technologies and cloud providers to lower our training costs by more than 8x while also cutting model training time by 4x.

With machine learning at the core of our company, we’ve found that minimizing training costs, eliminating developer overhead, and organizing experiments are extremely important. Over the last decade, as the complexity of building, deploying, and maintaining large machine learning applications has grown, so too has the importance of a new field, MLOps, dedicated to solving these challenges.

The Challenge

Given the importance of ML to our product, iteration speed, and the experimental nature of our task, we had several requirements that needed to be met. So what infrastructure problems did we need to solve?

- Jupyter notebook support: we wanted a way to fire up notebooks at any time, and still be able to continue from where we left off previously.

- Long running training jobs: we wanted to have a queue of training jobs that would run one after another, so that we could fire off a job and receive a trained model at some point in the future. We also wanted to be able to run multiple training jobs in parallel.

- Model reproducibility: Making tweaks to old models, retraining them, and comparing models should be easy and consistent. We also wanted to increase our bus factor.

With the requirements stated, let’s start from the beginning.

History: AWS

Our first infrastructure solution was to fire up a p3.8xlarge or p3.16xlarge instance on AWS for each ML engineer to use. The “MLOps” overhead was very low and usage was flexible.

Un-opinionated and simple.

Running multiple experiments or hyperparameter searches in parallel was not really possible with this setup. Without proper separation of jobs, we were hitting race conditions in the NVIDIA drivers (kernel panics). As the team grew, the cost scaled linearly. What’s worse, most instances were often idle.

Reproducibility

Inevitably, models became stale, and we needed to retrain on new data. Unfortunately, our code became stale too. We were not consistently checking notebooks into CI, and training and inference code drifted out of sync, which led to many hours of manual work.

We needed reproducibility.

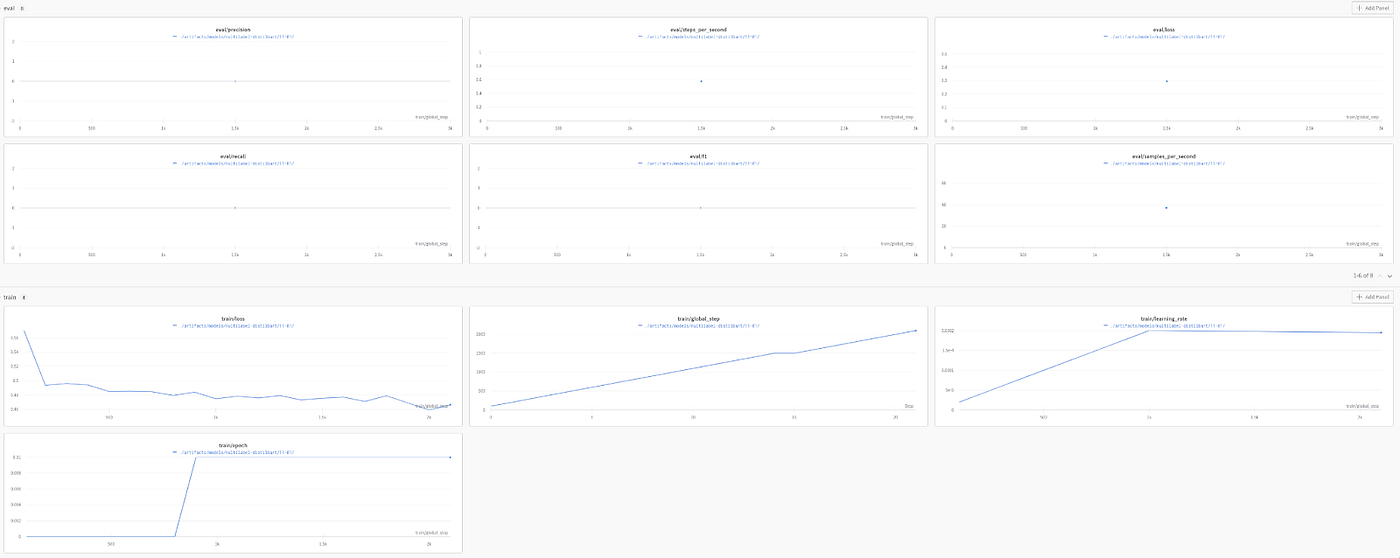

To solve this, we began using Weights & Biases as a way to track our data, training scripts, and metrics, which we liked and are still using. The service is very lightweight and customizable and fits our use-case very well.

Idling instances: AWS Spot Instances

We quickly realized our EC2 instances were idle 75% of the time and, even when active, were nowhere near 100% utilization. We started to experiment with spot instances. We hypothesized that if we set our max spot price higher than the on-demand price, Amazon’s scheduling algorithm would prioritize us. Unfortunately, that was not the case. After repeatedly requesting multi-GPU spot instances for a week with no success, we tried requesting single-GPU instances and received one after several hours, but it was terminated about 15 minutes later. Spot pricing was not the solution (at least until the chip shortage ends).

Idling instances: SageMaker

Next, we explored SageMaker. We thought that its support for hosted notebooks (SageMaker Studio) and its training jobs would make it a great choice for us. At first glance, it seemed very promising. Although it costs 10–20% more than on-demand instances, instances won’t unintentionally idle, which would eliminate the main cost associated with regular EC2 instances.

The downside is that SageMaker Studio hosted notebooks are very rigid; you can’t install plugins. This was a dealbreaker for some of our ML engineers who had customized setups.

To further complicate things, the chip shortage made using SageMaker much harder. It could take several days to restart instances. As a result, we ended up idling our instances to prevent this, bringing us right back to where we started, but now with an additional 10–20% cost increase per instance.

With so many issues, we decided not to stay with SageMaker.

Do we need V100+ for training transformer models?

GCP and Azure were also affected by the chip shortage, and wouldn’t give us the quota to fire up any GPU instances. Linode stood out as a cheap alternative. Although they only provided Quadro RTX 6000 GPUs, we found that training times were similar. With 24 GB of memory, the RTX 6000s could fit 50% more examples per iteration than the 16 GB V100s, even though the V100s have 11% more tensor cores. This difference in memory was enough to make training runs take approximately the same amount of time on RTX 6000s as they did on V100s.
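The two percentages in this comparison follow from the published specs of the cards. The short sketch below reproduces them, under the simplifying assumption (ours, for illustration) that the number of examples per iteration scales linearly with GPU memory; the helper name `relative_gain` is hypothetical.

```python
# Published hardware specs for the two GPUs compared above.
V100_MEMORY_GB = 16
RTX6000_MEMORY_GB = 24
V100_TENSOR_CORES = 640
RTX6000_TENSOR_CORES = 576


def relative_gain(new: float, old: float) -> float:
    """Return the fractional gain of `new` over `old` (0.5 means 50% more)."""
    return new / old - 1.0


# Assumption: examples per iteration scale linearly with GPU memory.
batch_gain = relative_gain(RTX6000_MEMORY_GB, V100_MEMORY_GB)
core_gain = relative_gain(V100_TENSOR_CORES, RTX6000_TENSOR_CORES)

print(f"RTX 6000 fits {batch_gain:.0%} more examples per iteration")
print(f"V100 has {core_gain:.0%} more tensor cores")
```

In practice the model weights and optimizer state take a fixed share of memory, so the real batch-size gain can differ from this linear estimate.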

With Linode we could get cost savings, but the chip shortage was back with a vengeance. Linode struggled to provision nodes with more than three RTX6000 GPUs. To solve this, we launched some shared 3xGPU instances and created a simple job execution script that would run jobs placed on a FIFO queue. We liked this approach, but wanted support for notebooks as well.
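The original job execution script is not shown here, but the idea of a FIFO job queue can be sketched in a few lines. This is a minimal illustration, not our actual script: the function name `run_fifo` and the placeholder commands are hypothetical, and a real version would also need to assign GPUs and persist the queue.

```python
import subprocess
import sys
from collections import deque
from typing import Deque, List, Tuple


def run_fifo(jobs: Deque[List[str]]) -> List[Tuple[List[str], int]]:
    """Run queued commands one at a time, oldest first, and record exit codes."""
    results = []
    while jobs:
        command = jobs.popleft()  # FIFO: take the job that was queued first
        completed = subprocess.run(command)  # blocks until the job finishes
        results.append((command, completed.returncode))
    return results


# Placeholder "training jobs": each one is just a short Python command.
queue: Deque[List[str]] = deque()
queue.append([sys.executable, "-c", "print('training job 1')"])
queue.append([sys.executable, "-c", "print('training job 2')"])

for command, code in run_fifo(queue):
    print(command[-1], "-> exit code", code)
```

Because each job runs to completion before the next one starts, jobs never compete for the same GPUs, at the cost of leaving capacity unused when a job needs fewer GPUs than the machine has.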

Kubeflow

Kubeflow is an MLOps tool that supports running notebooks, TensorBoards, training jobs, hyperparameter searches, and a lot of other cool things. It runs on Kubernetes (K8s), and leans heavily on K8s for a lot of its features.

Running K8s on GPU nodes is not natively supported by Linode Kubernetes Engine. To run on GPU nodes, we needed to run our own K8s cluster on on-demand instances. We found a nice Terraform module that allowed us to fire up such a cluster on Linode via kubeadm.

This solution still cost us roughly the same amount as before, at just over $1,000 per GPU per month, but now we had support for notebooks, training runs, and better reproducibility. It solved all our problems at less than a quarter of the cost per GPU of AWS.

GCP

This solution was working great, but as time went on and GCP improved their A100 fleet, our quota request for A100 instances was granted, so we decided to give GCP another go. We set up Kubeflow by following the standard GCP tutorial, using preemptible A100 nodes ($0.88 per GPU per hour, or half the price of Linode). This worked like a charm, and with the A100 being a faster GPU with 40 GB of VRAM, we were able to get up to 4x faster training jobs than on Linode due to increased batch sizes.

Our AWS experience had made us skeptical about preemptibles, but we observed hardly any node shutdowns (about one shutdown every 50 jobs). That’s a pretty good trade-off for a total cost of ⅛ that of our original solution!
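An occasional shutdown is cheap to absorb when a job can resume from where it stopped. The sketch below shows one common pattern, checkpoint and resume, using a toy loop. It is an illustration under our own assumptions rather than a description of the setup above: the `train` function, the JSON checkpoint format, and the `stop_after` parameter (which simulates a preemption) are all hypothetical.

```python
import json
import os
import tempfile
from typing import Optional


def train(total_steps: int, checkpoint_path: str,
          stop_after: Optional[int] = None) -> int:
    """Run a toy training loop that resumes from the last saved step.

    `stop_after` simulates a node shutdown after that many steps in this run.
    Returns the step reached when the function exits.
    """
    step = 0
    if os.path.exists(checkpoint_path):
        # Resume from the step recorded by a previous (interrupted) run.
        with open(checkpoint_path) as f:
            step = json.load(f)["step"]

    steps_this_run = 0
    while step < total_steps:
        if stop_after is not None and steps_this_run >= stop_after:
            break  # simulated preemption
        step += 1  # stand-in for one real optimization step
        steps_this_run += 1
        # Write to a temp file and rename, so a shutdown in the middle of
        # a write cannot leave a corrupted checkpoint behind.
        tmp_path = checkpoint_path + ".tmp"
        with open(tmp_path, "w") as f:
            json.dump({"step": step}, f)
        os.replace(tmp_path, checkpoint_path)
    return step


path = os.path.join(tempfile.mkdtemp(), "checkpoint.json")
print(train(10, path, stop_after=4))  # first run is interrupted
print(train(10, path))                # second run resumes and finishes
```

A real job would save model and optimizer state instead of a step counter, and would usually checkpoint every few minutes rather than after every step.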

This setup had all the advantages of K8s on Linode, but with 4x faster GPUs and autoscaling groups, which shut down unused nodes. The total cost reduction now stood at more than 50% compared to Linode for the same hours of compute, and the total cost was just 12.5% of the cost of AWS.

As the cost per FLOP is decreasing exponentially with time, we’ve found that the latest and greatest GPUs are often the cheapest way to train and iterate on ML models, provided the compute and memory can be fully utilized.

Buying GPUs

Until now, we have discussed only cloud solutions. But what about buying your own infrastructure? The following tables summarize the costs of running 8 A100 GPUs and highlight some of the less obvious costs of buying GPUs:

GCP Costs

- 8xA100 nodes: $70,000 / yr

Roll your own costs

The newest GPUs are bought every 12 months due to a 12x increase in training data every year:

- 8xA100 rack with Linode specs: $150,000

- Return for selling old hardware (30%): -$45,000

- Co-location, bandwidth, power bills: $3,000 / yr

- Hardware failures (1 GPU / 2 years): $15,000 / yr

- On-call team (1 employee): $100,000 / yr

Total = $268,000 in the first year, $118,000 in the second year, $73,000 in the third year = $153,000 average cost per year

Accounting for hidden costs, it’s $83k / yr cheaper to use GCP. If you buy enough of these servers, you get a bulk discount (up to about 15%), and the hidden costs scale sublinearly with the number of servers. In practice, once you reach 5 servers, rolling your own hardware ends up being cheaper than GCP.
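The single-server totals can be reproduced with a short calculation. The sketch below assumes, as the yearly figures imply, that the hardware is purchased once in the first year and the resale value is credited in the third year; the function name `yearly_costs` is hypothetical.

```python
from typing import List


def yearly_costs(purchase: int, resale: int, running_per_year: int,
                 years: int = 3) -> List[int]:
    """Per-year cost: purchase in year 1, running costs every year,
    resale value credited in the final year."""
    costs = []
    for year in range(1, years + 1):
        cost = running_per_year
        if year == 1:
            cost += purchase
        if year == years:
            cost -= resale
        costs.append(cost)
    return costs


# Running costs: co-location and power, hardware failures, on-call employee.
running = 3_000 + 15_000 + 100_000
on_prem = yearly_costs(purchase=150_000, resale=45_000, running_per_year=running)
average = sum(on_prem) / len(on_prem)
gcp = 70_000

print(on_prem)                 # [268000, 118000, 73000]
print(average, average - gcp)  # 153000.0 83000.0
```

Changing the purchase price, resale value, or running costs makes it easy to see how sensitive the comparison is to each assumption.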

Roll your own costs for 6 servers

- 6 x 8xA100 rack with Linode specs (15% discount): $765,000

- Return for selling old hardware (30%): -$270,000

- Co-location, bandwidth, power bills: $18,000 / yr

- Hardware failures (1 GPU / server / year): $90,000 / yr

- On-call team (1 employee): $100,000 / yr

GCP: $420k / yr

On-prem: $0.973M in the first year, $208k in the second year, -$65k in the third year = $372k average cost per year

And btw, we’re hiring!
