Generative AI (GenAI) is having a moment

Generative AI (GenAI) is having a moment. In just the past few months, diffusion models and large language models have revolutionized the field of machine learning. From creating realistic images to generating human-like text, not a month goes by without a new, powerful model. We’ve come a long way from the avocado chair of the first DALL-E.

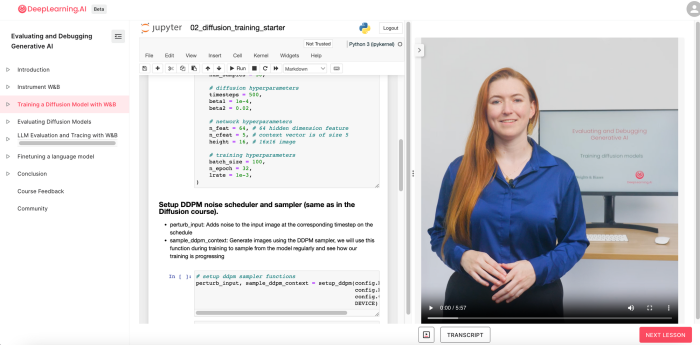

However, training and evaluating these models can be quite complex. That’s why DeepLearning.AI, in collaboration with Weights & Biases, has launched a new course, **Evaluating and Debugging Generative AI**. In this blog post, we’ll give you a sneak peek into the second lesson of the course, taught by Carey Phelps, founding Product Manager at Weights & Biases.

Specifically, we’ll learn about diffusion models, how they’re trained, and how to evaluate them using best-in-class tools.

The Magic of Diffusion Models

Diffusion models are denoising models: the model’s primary task is not to generate images directly, but to remove noise from them. During training, we add noise to images and ask the model to predict the noise that was added.
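To make the "add noise, then predict it" idea concrete, here is a minimal sketch in plain Python (no PyTorch, so it runs anywhere). It assumes a standard DDPM-style linear noise schedule; the function names, the `beta1`/`beta2` values, and the toy four-pixel "image" are illustrative assumptions, and the lesson's own `perturb_input` helper may differ in its details.

```python
import math
import random


def linear_alpha_bars(timesteps, beta1=1e-4, beta2=0.02):
    """Cumulative product of (1 - beta_t) for a linear beta schedule.

    Index 0 means "no noise yet"; index `timesteps` is the noisiest step.
    """
    alpha_bars = [1.0]
    for t in range(1, timesteps + 1):
        beta_t = beta1 + (beta2 - beta1) * t / timesteps
        alpha_bars.append(alpha_bars[-1] * (1.0 - beta_t))
    return alpha_bars


def perturb(pixels, t, noise, alpha_bars):
    """Mix clean pixels with Gaussian noise for timestep t (standard DDPM form)."""
    signal = math.sqrt(alpha_bars[t])
    sigma = math.sqrt(1.0 - alpha_bars[t])
    return [signal * p + sigma * n for p, n in zip(pixels, noise)]


def mse(pred, target):
    """Mean squared error between two equal-length lists."""
    return sum((p - q) ** 2 for p, q in zip(pred, target)) / len(target)


rng = random.Random(0)
alpha_bars = linear_alpha_bars(timesteps=500)
pixels = [0.5, -0.25, 1.0, 0.0]                 # a toy "image"
noise = [rng.gauss(0.0, 1.0) for _ in pixels]   # the training target
noisy = perturb(pixels, 250, noise, alpha_bars)

# A perfect denoiser would output `noise` itself, giving zero loss.
perfect_loss = mse(noise, noise)
# A model that always predicts "no noise" pays the mean squared noise as loss.
baseline_loss = mse([0.0] * len(noise), noise)
```

The training target is the noise, not the clean image, which is why the loss in the lesson's loop compares `pred_noise` against `noise`.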

If you are interested in diffusion models, we recommend checking out our Guide to Using Stable Diffusion XL with HuggingFace Diffusers and W&B.

During the training phase, we’ll monitor relevant metrics like the loss curve, but there’s an interesting twist. The loss curve tends to flatten out quite early, which could mislead you into thinking that your model is fully trained. However, by sampling from the model regularly during training, we observe that the quality of generated images continues to improve despite the plateau in loss. This means it’s crucial to log not only the loss but also samples at regular intervals.
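The pattern of logging scalars every epoch and samples at a fixed interval can be sketched without any ML libraries. Everything below is a hypothetical stand-in: the toy loss, the fake sampler, and the `log_fn` callback are assumptions made for illustration. In a real run, `log_fn` could be `wandb.log` and the samples would be wrapped as images.

```python
def should_log_samples(epoch, n_epochs, every=4):
    """True on every `every`-th epoch and on the final epoch."""
    return epoch % every == 0 or epoch == n_epochs - 1


def train_with_sampling(n_epochs, train_one_epoch, sample_fn, log_fn, every=4):
    """Log the loss every epoch and generated samples at a regular interval."""
    for epoch in range(n_epochs):
        loss = train_one_epoch(epoch)
        log_fn({"epoch": epoch, "loss": loss})
        if should_log_samples(epoch, n_epochs, every):
            log_fn({"epoch": epoch, "samples": sample_fn(epoch)})


# Toy stand-ins: a loss that flattens out, and a sampler that returns a label.
history = []
train_with_sampling(
    n_epochs=10,
    train_one_epoch=lambda ep: max(0.1, 1.0 / (ep + 1)),
    sample_fn=lambda ep: f"samples@epoch{ep}",
    log_fn=history.append,
)

sample_epochs = [row["epoch"] for row in history if "samples" in row]
```

Sampling is usually far slower than a training step for diffusion models, so an interval keeps the overhead small while still showing how image quality evolves after the loss has flattened.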

Hands-on with Weights & Biases

One of the highlights of the course is the integration with Weights & Biases, a powerful platform for experiment tracking, dataset versioning, and model management. We train our diffusion model on the sprites dataset (Fruits&Veg + Game Icons) using the training notebook from the DeepLearning.AI course and log the results to Weights & Biases.

Let’s take a look at some of the steps involved. Here is the code snippet you will be using in the lesson after setting everything up.

    import torch
    import torch.nn.functional as F
    import wandb
    from tqdm import tqdm

    # nn_model, optim, dataloader, config, DEVICE, SAVE_DIR, noises, ctx_vector,
    # perturb_input and sample_ddpm_context come from the lesson's setup cells

    # create a wandb run
    run = wandb.init(project="dlai_sprite_diffusion",
                     job_type="train",
                     config=config)

    # we pass the config back from W&B
    config = wandb.config

    for ep in tqdm(range(config.n_epoch), leave=True, total=config.n_epoch):
        # set into train mode
        nn_model.train()
        # linearly decay the learning rate over the epochs
        optim.param_groups[0]['lr'] = config.lrate*(1-ep/config.n_epoch)

        pbar = tqdm(dataloader, leave=False)
        for x, c in pbar:  # x: images  c: context
            optim.zero_grad()
            x = x.to(DEVICE)
            c = c.to(DEVICE)
            # randomly zero out the context for about 20% of the batch
            context_mask = torch.bernoulli(torch.zeros(c.shape[0]) + 0.8).to(DEVICE)
            c = c * context_mask.unsqueeze(-1)
            noise = torch.randn_like(x)
            t = torch.randint(1, config.timesteps + 1, (x.shape[0],)).to(DEVICE)
            x_pert = perturb_input(x, t, noise)
            pred_noise = nn_model(x_pert, t / config.timesteps, c=c)
            loss = F.mse_loss(pred_noise, noise)
            loss.backward()
            optim.step()

            wandb.log({"loss": loss.item(),
                       "lr": optim.param_groups[0]['lr'],
                       "epoch": ep})

        # save model periodically
        if ep%4==0 or ep == int(config.n_epoch-1):
            nn_model.eval()
            ckpt_file = SAVE_DIR/"context_model.pth"
            torch.save(nn_model.state_dict(), ckpt_file)

            artifact_name = f"{wandb.run.id}_context_model"
            at = wandb.Artifact(artifact_name, type="model")
            at.add_file(ckpt_file)
            wandb.log_artifact(at, aliases=[f"epoch_{ep}"])

            samples, _ = sample_ddpm_context(nn_model,
                                             noises,
                                             ctx_vector[:config.num_samples])
            wandb.log({
                "train_samples": [
                    wandb.Image(img) for img in samples.split(1)
                ]})

    # finish W&B run
    wandb.finish()

This code starts a Weights & Biases run for our training process and logs the loss, learning rate, and current epoch at every training step. Each time a checkpoint is saved, it also logs a batch of generated image samples, allowing us to track the model’s progression visually.
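One detail in the loop that is easy to miss is the learning-rate line, which applies a linear decay per epoch. The sketch below reproduces that formula in isolation. The base learning rate and epoch count are illustrative values chosen for this example, not figures taken from the post.

```python
def linear_decay_lr(base_lr, epoch, n_epochs):
    """Same formula as the training loop: base_lr * (1 - epoch / n_epochs)."""
    return base_lr * (1 - epoch / n_epochs)


base_lr, n_epochs = 1e-3, 32  # illustrative values only
schedule = [linear_decay_lr(base_lr, ep, n_epochs) for ep in range(n_epochs)]

first_lr = schedule[0]       # equals base_lr at epoch 0
halfway_lr = schedule[16]    # half of base_lr at the midpoint
final_lr = schedule[-1]      # small, but still above zero
```

Because the last epoch index is `n_epochs - 1`, the learning rate never actually reaches zero inside the loop. Logging `lr` next to `loss` makes this schedule visible as its own chart in the run.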

Sign up for the free course and run the code alongside the lessons in the new DeepLearning.AI platform!

Diving Deeper into Training a Diffusion Model

When we train our diffusion model, we set up a training loop. At the start, we initialize a Weights & Biases run to keep track of the training. As the training proceeds, we log the loss, learning rate, and current epoch. Here, the model checkpoints are saved every four epochs, providing a snapshot of the model’s state at that point in time.

Still, the most rewarding part is observing the model’s progress visually. The initially noisy and grainy images generated by the model become clearer and more recognizable as training progresses. These samples are logged to your Weights & Biases workspace, where you can view the image generations in real time. You can view the results of this lesson’s training run here!

Finally, once our model starts generating high-quality images, we make it available for the rest of the team using the Model Registry feature in Weights & Biases. This allows team members to view all the best model versions, the lineage of the model, and get back to the training run that produced the model.
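As a rough sketch of what promoting a checkpoint might look like in code: W&B runs expose a `link_artifact` method that links an already-logged artifact to a registry collection. The helper name, the `"staging"` alias, and the target path below are hypothetical placeholders, and the exact path format depends on your W&B setup, so check the W&B documentation for yours. A small recording stand-in replaces the real run so the example works offline.

```python
def promote_to_registry(run, artifact, target_path, aliases=("staging",)):
    """Link an already-logged model artifact to a registry collection.

    `run` is expected to behave like a wandb run, i.e. to provide
    link_artifact(artifact, target_path, aliases=...).
    """
    run.link_artifact(artifact, target_path, aliases=list(aliases))
    return target_path


class RecordingRun:
    """Offline stand-in for a wandb run that just records link calls."""

    def __init__(self):
        self.links = []

    def link_artifact(self, artifact, target_path, aliases=None):
        self.links.append((artifact, target_path, aliases))


fake_run = RecordingRun()
promote_to_registry(
    fake_run,
    artifact="context_model-checkpoint",                     # placeholder object
    target_path="my-team/model-registry/sprite-diffusion",   # hypothetical path
)
```

With a real run, `artifact` would be the `wandb.Artifact` logged during training, which is what lets teammates trace a registered model back to the run that produced it.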

Key Takeaways

The “Evaluating and Debugging Generative AI” course offers a blend of theory and hands-on experience. Lesson two focuses on training. Sign up for the course today and practice:

- Managing hyperparameter config

- Collecting artifacts for dataset and model versioning

- Logging experiment results

- Tracing prompts and responses to LLMs over time in complex interactions
